Introduction

In today's distributed software world, performance is defined by the path users take to reach your services. Multi-cloud deployments - spanning AWS, GCP, Azure, and regional clouds - create opportunities for resilience but also introduce routing complexity that can increase latency, reduce uptime, and complicate operational visibility. Traditional single-cloud network designs seldom address the dynamic nature of cross-cloud traffic, where a suboptimal route can add tens or hundreds of milliseconds to critical API calls and user experiences. The objective of cloud routing optimization is to align network paths with business goals: minimize latency, maximize availability, and simplify governance across heterogeneous environments. This article lays out a practical view of how traffic engineering and routing choices affect real-world performance, and how to implement them without inflating your operations bill. The RDAP & WHOIS database is one of several data sources teams use to correlate routing signals with domain-level health signals and traffic sources.

Why cloud routing optimization matters in multi-cloud environments

Latency is not a fixed property of a single network; it emerges from routing decisions, peering relationships, and the location of downstream resources. In multi-cloud contexts, the same user might reach different clouds or edge locations depending on where traffic is steered in real time. Modern routing approaches - grounded in BGP optimization, Anycast concepts, and DNS-based steering - allow operators to influence the path that traffic takes across clouds and geographies. Industry best practices emphasize the ability to re-route traffic quickly in response to congestion, outages, or changing load patterns. For example, latency-based routing in public DNS services lets you direct users to the lowest-latency region or cloud resource, while health checks tie routing to real-time service availability. This combination is central to reducing perceived latency and improving fault tolerance. (docs.aws.amazon.com)

Key techniques for reducing latency in multi-cloud networks

Anycast routing and intelligent DNS resolution

Anycast routing advertises a single IP address across multiple locations, so client requests are directed to the closest or best-performing node. This approach is particularly potent when combined with DNS and content delivery networks, because it lets edge networks respond from the nearest PoP and adapt quickly to changing network conditions. The Cloudflare Anycast DNS overview explains how proximity-based routing can reduce resolution and connection times while increasing resilience against certain attack vectors. In practice, anycast can compress latency for end users and help isolate regional outages from global traffic.

See Cloudflare: What is Anycast DNS? for a formal explanation of the approach and its benefits. (cloudflare.com)

BGP-based traffic engineering and policy-driven routing

Border Gateway Protocol (BGP) remains the backbone of inter-domain routing. Smart BGP optimization - whether inbound, outbound, or peering-oriented - lets operators influence which paths traffic prefers, reducing congestion and improving stability in multi-cloud networks. Techniques like ingress/egress traffic engineering (TE) and policy-based routing let operators shape traffic toward favorable peers and routes rather than accepting default best-path behavior. Cisco's PfR (Performance Routing) documentation highlights inbound optimization as a practical way to steer traffic to the most favorable paths, while Juniper's guidance on Egress Traffic Engineering demonstrates how service providers can use segment routing and BGP attributes to balance load across peering links. Taken together, these capabilities translate into lower latency for cross-cloud workloads and more predictable performance. Trade-offs include management complexity and the need for continuous monitoring to keep convergence times within target.

Key sources: Cisco: Network Optimization & High Availability, Juniper: BGP Egress Traffic Engineering. (cisco.com)
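
To make these policy levers concrete, here is a minimal Python sketch of a simplified BGP decision process: highest local preference first, then shortest AS path, then lowest MED. It is an illustration only. Real routers evaluate more tie-breakers (origin, eBGP versus iBGP, IGP cost, router ID), and MED is normally compared only between routes learned from the same neighboring AS. The peer names, AS numbers, and the `Route`, `best_path`, and `prepend` helpers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """A candidate path to one prefix (all values here are hypothetical)."""
    next_hop: str
    local_pref: int   # higher wins; set by local policy
    as_path: tuple    # shorter wins; prepending lengthens it
    med: int = 0      # lower wins; a hint supplied by the neighbor


def best_path(routes):
    """Pick a route using a simplified subset of the BGP tie-breakers."""
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path), r.med))


def prepend(route, asn, times):
    """Return a copy of the route with `asn` prepended `times` times."""
    return Route(route.next_hop, route.local_pref,
                 (asn,) * times + route.as_path, route.med)


# AS numbers come from the range reserved for documentation.
candidates = [
    Route("peer-a", local_pref=100, as_path=(64496, 64499)),
    Route("peer-b", local_pref=100, as_path=(64497, 64500, 64501)),
    Route("peer-c", local_pref=200, as_path=(64498, 64502, 64503, 64504)),
]

# Local preference outranks AS-path length, so peer-c is chosen
# even though it has the longest AS path.
print(best_path(candidates).next_hop)
```

Raising local preference is the usual outbound lever, while AS-path prepending (modeled by `prepend`) is a common, if coarse, way to make a path less attractive to others for inbound traffic.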

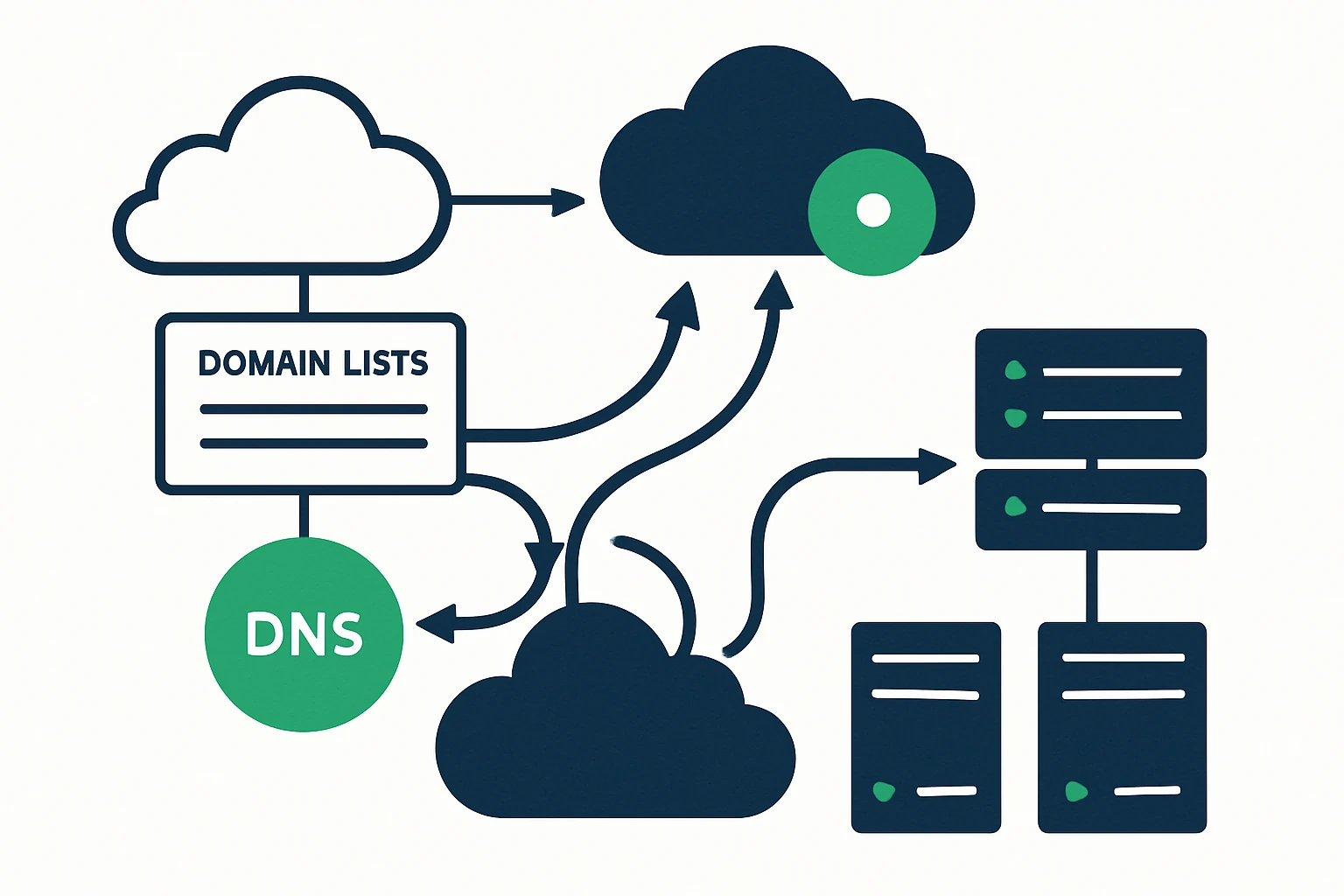

DNS-based routing and cross-region failover

DNS-based steering, when paired with health checks, provides a resilient, low-overhead mechanism to direct traffic toward healthy clouds or regions. Latency-based DNS routing uses measured regional latencies to return the best-performing endpoint, while DNS failover enables automatic rerouting in the face of outages. AWS Route 53 documentation details latency-based routing and the breadth of routing types (geo, geoproximity, weighted, etc.), including how to combine these with DNS failover for low-latency, fault-tolerant architectures. For private and hybrid deployments, geolocation and latency routing within Route 53 Private DNS further extend these capabilities into internal networks. Real-world guidance and white papers from AWS demonstrate how cross-region DNS-based load balancing can be a foundational element of multi-cloud resilience. Observability and timely health signals are critical: stale health data can lead to suboptimal routing decisions.

See AWS: Latency-based routing and AWS: Cross-Region DNS-based load balancing and failover for canonical guidance. (docs.aws.amazon.com)
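
The decision that latency-based routing with health checks makes can be modeled in a few lines. The sketch below is a simplified model of that logic, not the Route 53 API: it assumes you already have a per-region latency measurement and a health flag for each endpoint. The function name `pick_endpoint` and the region labels are hypothetical.

```python
def pick_endpoint(latency_ms, healthy):
    """Return the healthy region with the lowest measured latency, or None.

    latency_ms: mapping of region name -> measured latency in milliseconds
    healthy:    mapping of region name -> bool from the latest health check
    Regions with no health record are treated as unhealthy.
    """
    candidates = {r: ms for r, ms in latency_ms.items() if healthy.get(r, False)}
    if not candidates:
        return None
    return min(candidates, key=candidates.get)


latency_ms = {"aws-us-east": 42.0, "aws-eu-west": 88.0, "gcp-europe-west": 95.0}

# All regions healthy: the lowest-latency region is chosen.
all_up = {region: True for region in latency_ms}
print(pick_endpoint(latency_ms, all_up))

# The fastest region fails its health check: traffic moves to the next best.
one_down = dict(all_up, **{"aws-us-east": False})
print(pick_endpoint(latency_ms, one_down))
```

Treating a missing health record as unhealthy is a deliberately conservative choice here; it means a region only receives traffic once a health signal has been seen for it.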

Observability and data sources for informed routing decisions

Effective traffic engineering hinges on timely, trustworthy data. In practice, teams combine active measurements (latency, jitter, packet loss, traceroutes) with control-plane signals (BGP updates, peering changes) and game-theory style decision models to decide where to send traffic. Domain data from RDAP and WHOIS feeds can help correlate routing decisions with domain ownership, transfer status, and registration details - supporting anomaly detection and post-incident analysis. For readers who want a concrete data source, the RDAP & WHOIS database provides real-time metadata that can enrich traffic telemetry and domain health signals during incident response or capacity planning.
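
As a small illustration of turning active measurements into routing inputs, the following sketch summarizes a batch of probe results into median latency, 90th-percentile latency, and packet loss. It assumes each probe yields either a round-trip time in milliseconds or `None` when the probe was lost; the function names and sample values are hypothetical, and the nearest-rank percentile is one of several common definitions.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list (pct is an integer 1..100)."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(rtts_ms):
    """Summarize probes; rtts_ms holds RTTs in ms, with None for a lost probe."""
    if not rtts_ms:
        raise ValueError("no probes to summarize")
    received = [r for r in rtts_ms if r is not None]
    loss = 1 - len(received) / len(rtts_ms)
    if not received:
        return {"p50_ms": None, "p90_ms": None, "loss": loss}
    return {
        "p50_ms": percentile(received, 50),
        "p90_ms": percentile(received, 90),
        "loss": loss,
    }


probes = [20, 22, 21, None, 25, 80, 23, 24, 22, 21]
print(summarize(probes))
```

Tail percentiles matter here because a single slow path (the 80 ms sample above) barely moves the median but dominates the 90th percentile, which is closer to what affected users experience.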

A practical framework for implementing traffic engineering in multi-cloud deployments

Operationalizing cloud routing optimization benefits from a structured approach that balances speed, safety, and cost. The following framework offers a pragmatic, vendor-agnostic path from discovery to execution:

| Step | What to Do | Key Metrics | Tools / Signals |
|---|---|---|---|
| 1. Map the topology | Inventory clouds, regions, edges, and peering relationships, map control planes and data planes across all providers. | Throughput by region, end-user latency distribution, regional outages | Cloud provider dashboards, BGP peerings, traceroute data, WAN links |
| 2. Measure and baseline | Collect real-time latency measurements, RTTs, and health signals from end users to establish a baseline. | Median/90th percentile latency, packet loss rate, regional availability | Latency dashboards, synthetic tests, Route 53 health checks, cloud-native monitors |
| 3. Model and simulate | Create lightweight models of routing options (anycast vs. unicast, BGP path choices) and simulate failure scenarios. | Projected latency improvement, failover time, path stability | TE simulations, controlled experiments, BGP policy modeling |
| 4. Execute with guardrails | Incrementally deploy routing changes with monitoring, rollback plans, and clear ownership. | Convergence time, error rate post-change, MTTR | Change management processes, health-check templates, automated rollbacks |

In practice, this framework helps teams determine whether to lean into anycast-based edge routing, rely on BGP-based TE to optimize cross-peering, or combine both with a DNS-based steering layer. A structured, data-driven approach reduces the guesswork that so often accompanies multi-cloud networking initiatives. For organizations evaluating data sources, it can be helpful to explore a broad spectrum of signals and tie them to user-perceived performance, latency budgets, and reliability goals. A list of domains in the .com TLD can serve as a real-world anchor for understanding how global traffic patterns relate to TLD distribution and end-user geography.
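
The "model and simulate" step can start very small. The sketch below is one possible lightweight model, under the assumption that each user region is always served by its lowest-latency live endpoint: it computes the traffic-weighted mean latency for a baseline and for each single-endpoint failure. The latency matrix, traffic shares, endpoint names, and the `weighted_latency` helper are invented for illustration.

```python
def weighted_latency(latency_ms, traffic_share, down=frozenset()):
    """Traffic-weighted mean latency when each user region is served by
    its lowest-latency endpoint that is not in `down`."""
    total = 0.0
    for region, share in traffic_share.items():
        live = [ms for ep, ms in latency_ms[region].items() if ep not in down]
        if not live:
            raise ValueError(f"no live endpoint for {region}")
        total += share * min(live)
    return total


# Hypothetical measurements: user region -> endpoint -> latency in ms.
latency_ms = {
    "us": {"aws-us": 30, "gcp-eu": 110},
    "eu": {"aws-us": 105, "gcp-eu": 25},
}
traffic_share = {"us": 0.6, "eu": 0.4}  # fractions of total traffic

print("baseline:", weighted_latency(latency_ms, traffic_share))
for failed in ("aws-us", "gcp-eu"):
    print(f"{failed} down:", weighted_latency(latency_ms, traffic_share, {failed}))
```

Even a toy model like this makes the cost of each failure scenario explicit, which helps decide where an extra region or peering link would reduce latency the most.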

Limitations, trade-offs, and common mistakes

- Complexity and operational overhead: Advanced TE, BGP policy management, and anycast deployments require specialized skills and robust change control. Garbage-in/garbage-out telemetry can amplify misconfigurations if alerting and rollback plans are not baked in. This is a common source of latency regressions after a change.

- Data freshness and convergence: Routing decisions rely on timely signals. Stale health data or slow convergence can cause traffic to linger on suboptimal paths during outages, so graceful restart and fast re-convergence are vital. See best-practice guidance from Cisco on maintaining route stability during failures. (cloud.google.com)

- TTL and DNS caching: DNS-based steering is powerful, but TTL settings and client DNS resolver behavior can slow or delay failover. AWS documentation emphasizes the need to coordinate health checks with DNS TTLs to avoid prolonged misrouting. (docs.aws.amazon.com)

- Anycast limitations: While Anycast reduces latency for many users, it may not uniformly improve every region due to BGP path selection dynamics. Organizations should validate anycast deployments with synthetic tests and region-specific analysis.
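
The interaction between health checks and TTLs noted above lends itself to simple arithmetic. As a rough estimate, a client can keep using a failed endpoint for about the detection time (check interval multiplied by the number of consecutive failures required) plus the record TTL. The sketch below encodes that estimate; it ignores resolvers that cache beyond the TTL and any propagation delay inside the DNS provider, and the function names and example values are assumptions chosen for illustration.

```python
def worst_case_failover_s(check_interval_s, failure_threshold, ttl_s):
    """Rough upper bound on how long clients may keep hitting a failed endpoint."""
    detection_s = check_interval_s * failure_threshold
    return detection_s + ttl_s


def max_ttl_for_budget_s(budget_s, check_interval_s, failure_threshold):
    """Largest TTL that keeps the estimate within a failover budget (0 if none)."""
    return max(0, budget_s - check_interval_s * failure_threshold)


# Example: checks every 30 s, 3 consecutive failures required, 60 s TTL.
print(worst_case_failover_s(30, 3, 60))   # 150
# Faster checks and a shorter TTL tighten the bound.
print(worst_case_failover_s(10, 3, 30))   # 60
# With a 120 s budget and 30 s checks x 3 failures, only 30 s is left for the TTL.
print(max_ttl_for_budget_s(120, 30, 3))   # 30
```

Lower TTLs shorten failover but increase query volume, so the budget is usually set from the latency and availability targets rather than minimized outright.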

Putting it into practice: a practical scenario

Consider a software-as-a-service platform serving millions of users across the United States and Europe, with microservices deployed in AWS and Google Cloud. The goal is to minimize end-user latency while maintaining high availability during regional outages. A practical path might include:

- Deploy latency-based DNS routing for primary services, coupled with DNS failover to automatically reroute to healthy regions when a region's responsiveness drops below a defined threshold. This leverages the capabilities described by AWS Route 53 and its cross-region failover guidance.

- Establish BGP-based TE with strong peering policies to distribute outbound traffic to optimal provider edges and reduce egress bottlenecks. This includes egress routing optimization to balance inter-provider traffic and minimize congested paths, as described in Juniper’s TE guidance.

- Incorporate Anycast at the edge for critical DNS and API endpoints to shorten response paths for the majority of users and improve regional resilience. Cloudflare’s Anycast DNS overview provides the rationale and practical considerations for this approach.

- Maintain continuous observability with real-time latency and health dashboards, and use domain data sets (e.g., RDAP/WHOIS) to contextualize routing signals during incidents. The RDAP & WHOIS database is a concrete example of the type of data that can enrich post-incident analysis and capacity planning.
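
When rolling out changes like those above, an incremental shift with an automatic rollback condition keeps the blast radius small. The following sketch shows one possible guardrail, assuming the new path receives a percentage of traffic (for example through weighted DNS records) and that a 90th-percentile latency is measured after each step. The function name, step size, and 10% tolerance are hypothetical choices, not recommendations from the sources cited here.

```python
def next_weight(current_pct, baseline_p90_ms, observed_p90_ms,
                step_pct=10, tolerance=1.10):
    """Return the next traffic percentage for the new path.

    Rolls back to 0 if observed p90 latency exceeds the baseline by more
    than the tolerance factor; otherwise advances by one step, capped at 100.
    """
    if observed_p90_ms > baseline_p90_ms * tolerance:
        return 0
    return min(100, current_pct + step_pct)


weight = 10
# Hypothetical p90 readings (ms) taken after each step, against a 100 ms baseline.
for observed in (98, 104, 131):
    weight = next_weight(weight, baseline_p90_ms=100, observed_p90_ms=observed)
    print(weight)
```

In this run the first two readings stay within tolerance and the share advances, while the third reading breaches it and the share drops to zero; a production version would also watch error rate and require a minimum sample size before acting.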

Conclusion

In multi-cloud networks, routing decisions matter as much as server-side performance. A deliberate blend of Anycast routing, BGP-based traffic engineering, and DNS-driven steering can dramatically reduce latency and bolster uptime, but it requires disciplined observation, testing, and governance. The best results come from starting with a clear latency budget, implementing a measured change program, and combining multiple routing primitives in a way that complements your cloud footprints and business goals. For practitioners seeking broader domain data and cost transparency, exploring resources like the List of domains in .com TLD and related pricing pages can provide practical context during planning.

Whether you are optimizing cloud routing in a hybrid or multi-cloud setting or evaluating a dedicated traffic engineering service, the core truth remains: the fastest path is the one you can observe, validate, and adapt in real time. CloudRoute's approach to overlay routing and live-path selection illustrates how modern networks can dynamically converge toward optimal paths without sacrificing control or governance.