Introduction: solving latency and uptime in a multi-cloud world

For SaaS, DevOps, and enterprise teams, routing decisions at the edge increasingly determine whether a user experiences a fast, reliable application or a balky, intermittently available one. In multi-cloud environments, traffic does not stay neatly within a single provider’s network. It hops across regions, clouds, and internet exchange points, amplifying latency and introducing points of failure. The challenge is not a single technology but a coordinated strategy that combines edge routing, BGP optimization, DNS-based traffic steering, and observability. When done well, your cloud routing posture can reduce latency, improve uptime, and make multi-cloud networks feel like a single, cohesive fabric.

Understanding the core concepts: how traffic gets steered across clouds

Two foundational ideas underpin modern cloud routing: anycast-based edge routing and BGP-driven interconnects. Anycast assigns the same IP address to endpoints in multiple locations, and routers in the network determine the closest or best-performing path to that address. This is a central technique for directing user traffic to the nearest data center or PoP, and it is a key driver of latency reductions in practice. Cloudflare, a prominent advocate of anycast, explains that traffic is routed to the nearest edge location via anycast IP addressing, a design that underpins many global DNS and CDN deployments. (developers.cloudflare.com)

Broadly, BGP-based routing - often paired with anycast - lets operators announce the same prefixes from multiple locations, enabling dynamic path selection as network conditions change. Cloudflare’s architecture and reference materials describe how BGP announcements and the anycast fabric work together to move traffic toward the closest, most reachable edge. This capability is especially valuable when you’re coordinating traffic across AWS, Google Cloud, and Azure footprints. (developers.cloudflare.com)
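To make the idea of announcing one prefix from several locations concrete, here is a minimal sketch in Python. It assumes a heavily simplified selection rule (shortest AS path wins); real BGP best-path selection also weighs local preference, origin, MED, and other attributes. The site names, AS numbers, and the `Announcement` and `best_site` names are hypothetical and used only for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class Announcement:
    site: str                  # hypothetical PoP name
    prefix: str                # the shared anycast prefix
    as_path: Tuple[int, ...]   # AS numbers between the observer and the site


def best_site(announcements: Iterable[Announcement], prefix: str) -> Optional[str]:
    """Pick the site whose announcement has the shortest AS path.

    Simplification: only AS-path length is compared; ties are broken by
    site name so the result is deterministic.
    """
    candidates = [a for a in announcements if a.prefix == prefix]
    if not candidates:
        return None
    return min(candidates, key=lambda a: (len(a.as_path), a.site)).site


# Three sites announce the same documentation prefix (192.0.2.0/24).
routes = [
    Announcement("fra", "192.0.2.0/24", (64500, 64510)),
    Announcement("iad", "192.0.2.0/24", (64500, 64520, 64530)),
    Announcement("sin", "192.0.2.0/24", (64500, 64520, 64540, 64550)),
]

print(best_site(routes, "192.0.2.0/24"))

# Withdrawing the "fra" announcement shifts traffic to the next-best site.
remaining = [a for a in routes if a.site != "fra"]
print(best_site(remaining, "192.0.2.0/24"))
```

The second call illustrates the dynamic behavior described above: when one location stops announcing the prefix, the same address simply resolves to a different path, with no change on the client side.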

DNS as a companion to network routing

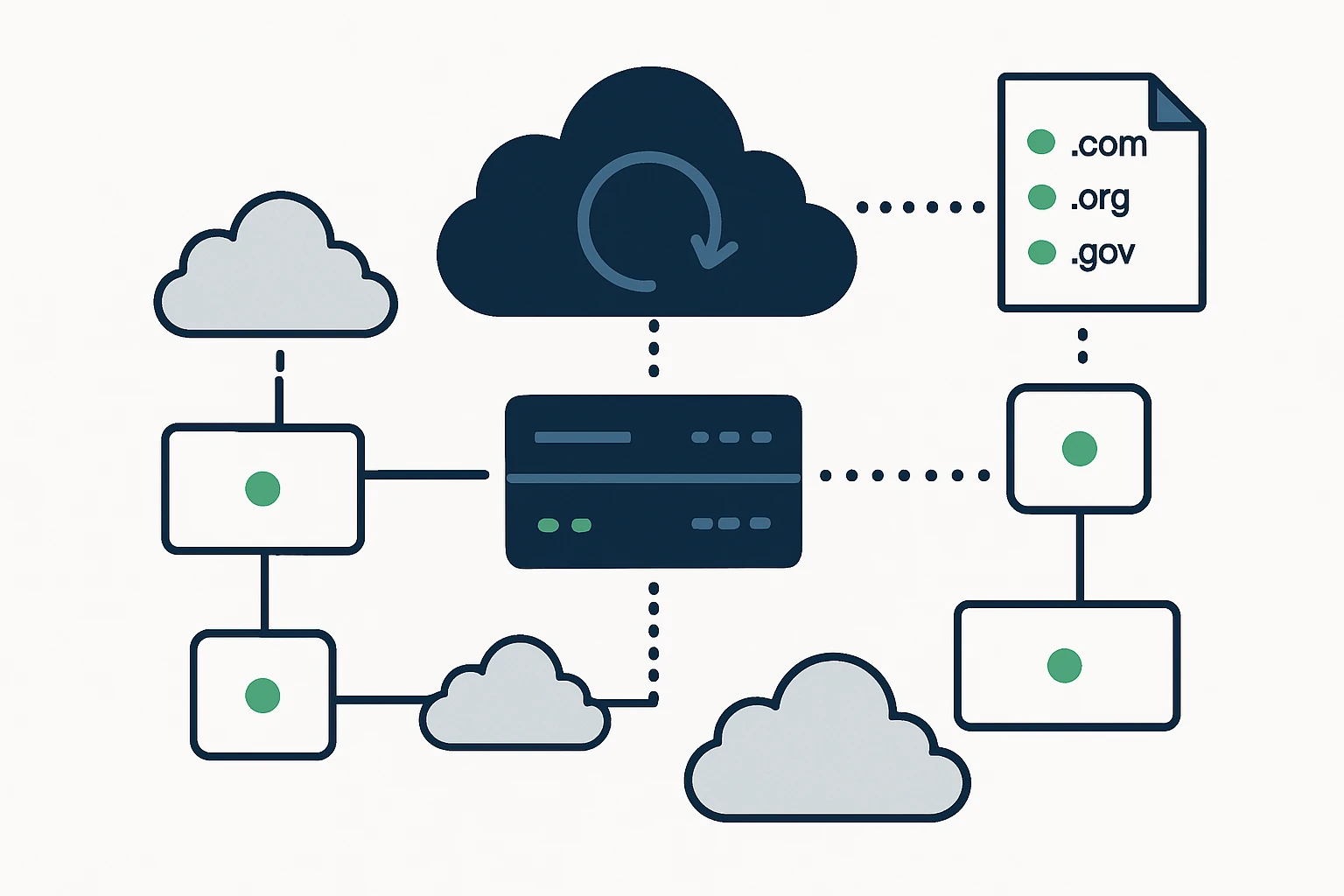

While BGP/anycast handles inter-site routing, DNS-based traffic steering provides another layer of control at the edge. Modern DNS services offer health checks and policy-based routing to shift user queries away from unhealthy or congested endpoints. AWS Route 53, for example, supports DNS failover and a range of routing policies (including health-check-based failover and traffic flow policies) to partition traffic across regions or clouds. This DNS-level control complements network routing, creating resilient end-user experiences even when a single region or cloud experiences issues. (docs.aws.amazon.com)
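The health-check-based failover described above can be sketched as a small decision function. This is not the Route 53 API; it is an illustrative model, and the `failover_answer` name, the endpoint addresses, and the health callback are all assumptions made for the example.

```python
from typing import Callable, Optional, Sequence


def failover_answer(
    endpoints: Sequence[str],
    is_healthy: Callable[[str], bool],
) -> Optional[str]:
    """Return the first healthy endpoint, scanning in priority order."""
    for endpoint in endpoints:
        if is_healthy(endpoint):
            return endpoint
    # Design choice of this sketch: if every check fails, still answer with
    # the top-priority endpoint rather than returning nothing at all.
    return endpoints[0] if endpoints else None


# Hypothetical endpoints (documentation address ranges), primary first.
ENDPOINTS = ["198.51.100.10", "203.0.113.20"]
down = {"198.51.100.10"}

# With the primary marked down, the secondary is returned.
print(failover_answer(ENDPOINTS, lambda ip: ip not in down))
```

Keeping the health check behind a callback makes it easy to plug in whatever signal a team already trusts, such as an HTTP probe or a synthetic transaction.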

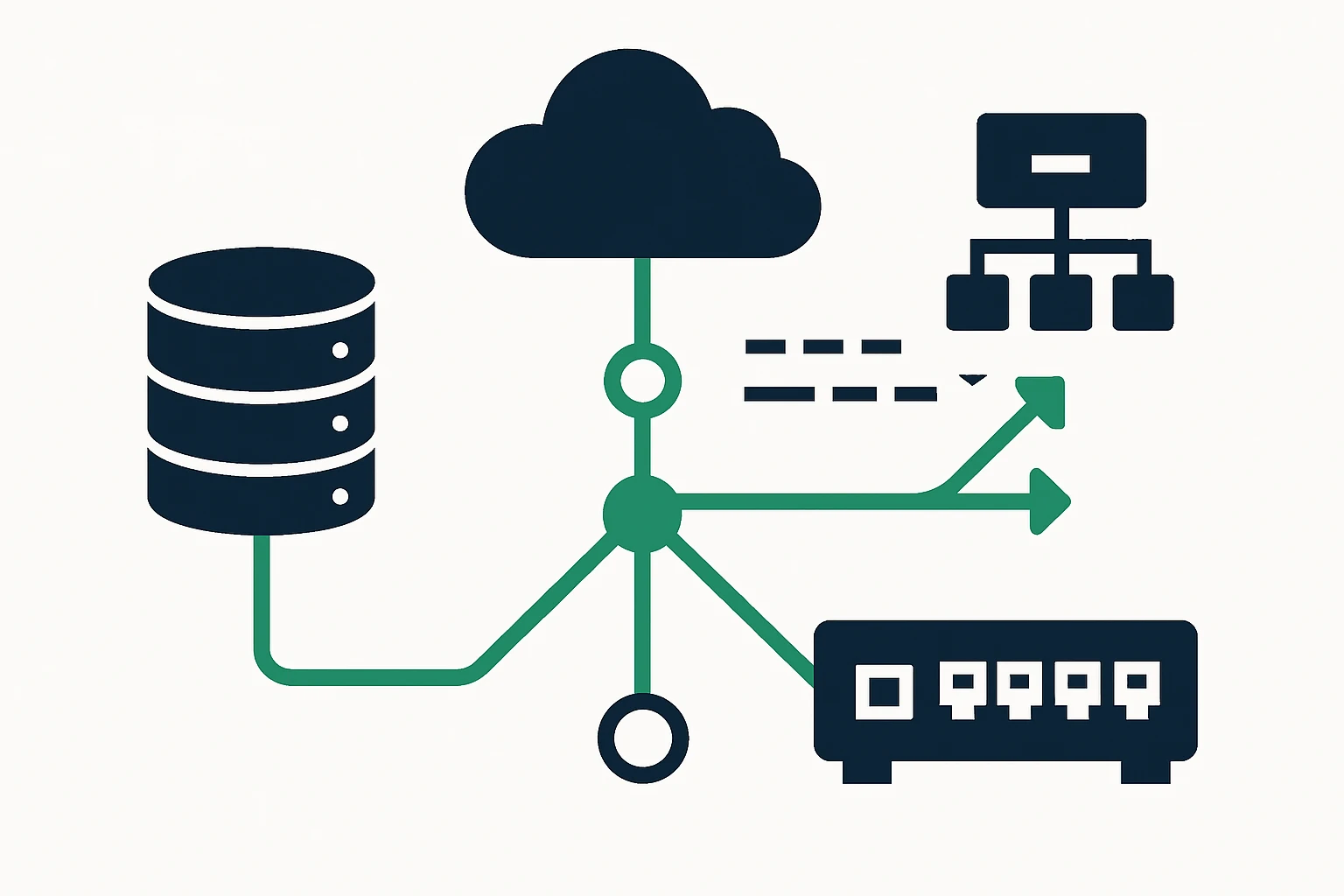

Rethinking multi-cloud networks: latency, cost, and control

Latency in multi-cloud setups is not solely a function of raw distance; it is a product of routing choices, peering relationships, and the behavior of global edge networks. In practice, teams optimize by combining proximity-aware routing (anycast/BGP) with intelligent DNS steering and carefully managed egress costs. Cloud providers and network vendors emphasize the need to design for high availability, near-edge routing, and predictable performance. Google Cloud, for example, highlights best practices for Cloud Router, including BGP-based failover readiness and high-availability links to improve reliability in dynamic environments. (docs.cloud.google.com)

In a multi-cloud context, cross-cloud traffic can incur egress charges and variable performance depending on the chosen path. A disciplined approach uses multiple levers - edge routing (anycast/BGP), DNS failover, regional traffic policies, and continuous telemetry - to steer traffic toward the best-performing endpoints while keeping operational costs in check. Cloudflare’s work on traffic flow and edge routing illustrates how the routing table and region-scoped policies influence where traffic lands, reinforcing the value of integrated, observable control across clouds. (developers.cloudflare.com)

DNS failover and global traffic management: when and how to use them

DNS failover is not a replacement for robust edge routing; it is a complementary mechanism that helps maintain uptime when a regional or cloud-scale failure occurs. AWS Route 53 documents how health checks observe endpoint health and how DNS failover can route traffic to healthy resources, with support for both simple and complex configurations (including weighted and alias records). The practical takeaway is to treat DNS failover as part of a broader strategy that includes proactive health monitoring and staged failovers, not as a one-off switch. (docs.aws.amazon.com)

Traffic Flow policies in Route 53 provide a visual, policy-driven way to shape DNS responses across global endpoints, helping teams implement geoproximity routing or latency-aware decisions as part of a broader global traffic management strategy. This demonstrates how DNS and network-layer routing can work in concert to reduce user-perceived latency and improve resilience. (aws.amazon.com)
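A latency-aware decision of the kind mentioned above can be modeled in a few lines. The sketch below assumes you already have per-endpoint latency measurements for a given client region; the endpoint labels, the numbers, and the `lowest_latency_endpoint` name are hypothetical, and a managed DNS service would apply its own measurement data and rules.

```python
from typing import Dict, Optional, Set


def lowest_latency_endpoint(
    latency_ms: Dict[str, float],
    healthy: Set[str],
) -> Optional[str]:
    """Pick the healthy endpoint with the lowest measured latency.

    latency_ms maps endpoint name -> latency as seen from one client region.
    Ties are broken by endpoint name so the choice is deterministic.
    """
    candidates = {ep: ms for ep, ms in latency_ms.items() if ep in healthy}
    if not candidates:
        return None
    return min(candidates, key=lambda ep: (candidates[ep], ep))


# Illustrative measurements from a single client region (values are made up).
measured = {"aws-eu-west": 24.0, "gcp-europe": 31.5, "azure-us-east": 96.0}

print(lowest_latency_endpoint(measured, {"aws-eu-west", "gcp-europe", "azure-us-east"}))
print(lowest_latency_endpoint(measured, {"gcp-europe", "azure-us-east"}))
```

Filtering by health before comparing latency keeps the two concerns separate: an endpoint that is fast but failing never wins the comparison.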

A practical framework for cloud routing optimization

To structure this complex problem, adopt a four-pillar framework that aligns with real-world needs: Observe, Decide, Act, Verify. This framework keeps routing aligned with business goals (latency reduction, uptime, cost control) while remaining adaptable as cloud footprints evolve.

- Observe - Instrument global latency, path stability, and availability across clouds. Collect telemetry from end users, cloud peers, and edge PoPs to map performance hotspots and failure modes. Use synthetic tests and real user metrics to understand where latency creeps in and which clouds are preferred for given regions.

- Decide - Choose the routing control levers that address the observed issues. Prioritize decisions that improve perceived latency and resilience, such as adjusting anycast routing to favor the most responsive PoPs, or tuning DNS failover policies to minimize user disruption during regional outages. The choice should balance latency gains with the risk of mid-connection state changes in long-lived connections, a known consideration with anycast-based routing. (blog.cloudflare.com)

- Act - Implement changes via a layered approach: (a) update edge routing announcements (BGP/anycast) to steer traffic to healthier regions, (b) adjust DNS failover settings (health checks, weighted records, and traffic-flow policies) to shift load when endpoints degrade, (c) validate egress paths and fees to control cost impact. Cloudflare’s architecture and Route 53’s health-check-driven failover illustrate how these controls operate in production. (developers.cloudflare.com)

- Verify - Monitor the impact of changes with end-to-end measurements and service-level indicators. Confirm latency improvements, error rate reductions, and cost impacts, and adjust as necessary. Use post-change telemetry to ensure that improvements are sustained and not just transient wins during testing.

Framework in practice: a quick reference block

- Observe: latency by region and cloud

- Decide: which routing lever to adjust (anycast, DNS, or both)

- Act: implement BGP announcements and DNS policies

- Verify: monitor end-user performance and cost
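The Observe, Decide, Act, Verify loop can be sketched as four small functions. This is a toy model under stated assumptions: latency samples are already collected per region, the "action" is an opaque callback standing in for a BGP or DNS change, and every name and number here is hypothetical.

```python
from statistics import median
from typing import Callable, Dict, List


def observe(samples: Dict[str, List[float]]) -> Dict[str, float]:
    """Summarize raw latency samples (ms) as a median per region."""
    return {region: median(vals) for region, vals in samples.items() if vals}


def decide(summary: Dict[str, float], budget_ms: float) -> List[str]:
    """List the regions whose median exceeds the latency budget, worst first."""
    over = [region for region, ms in summary.items() if ms > budget_ms]
    return sorted(over, key=lambda region: summary[region], reverse=True)


def act(regions: List[str], apply_change: Callable[[str], None]) -> List[str]:
    """Apply a routing change per region; the callback hides the real lever."""
    for region in regions:
        apply_change(region)
    return list(regions)


def verify(before: Dict[str, float], after: Dict[str, float],
           regions: List[str]) -> Dict[str, bool]:
    """Report, per changed region, whether the median latency went down."""
    return {r: after[r] < before[r] for r in regions if r in before and r in after}


# Illustrative data only.
before = observe({"eu": [40, 50, 60], "us": [120, 130, 200], "ap": [210, 220, 230]})
targets = decide(before, budget_ms=100)
changed = act(targets, apply_change=lambda region: None)  # no-op stand-in
after = {"eu": 50, "us": 90, "ap": 230}                   # post-change medians
print(verify(before, after, changed))
```

In this example the change helped one region and not the other, which is exactly the kind of result the Verify step is meant to surface before a change is declared a success.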

Limitations and common mistakes: what to watch out for

Even well-designed routing strategies have constraints. First, DNS-based failover relies on caching and TTLs. Overly short TTLs can backfire by causing flaps, while overly long TTLs delay failover. AWS Route 53 documentation emphasizes that health checks drive responses, but the actual DNS response can be cached by resolvers, so planning TTLs and cache behavior is essential. (docs.aws.amazon.com)
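A rough way to reason about the TTL trade-off is to estimate the worst-case time before clients see a failover: the time for health checks to declare the endpoint down, plus the time for cached answers to expire. The sketch below encodes that approximation; it assumes resolvers honor the TTL and ignores propagation delay inside the DNS provider, and the parameter values are illustrative rather than recommendations.

```python
def worst_case_failover_seconds(
    check_interval_s: float,
    failure_threshold: int,
    ttl_s: float,
) -> float:
    """Approximate upper bound on client-visible failover time.

    detection   = consecutive failed checks needed * interval between checks
    cache drain = a resolver may have cached the old answer just before failure
    """
    if check_interval_s < 0 or failure_threshold < 0 or ttl_s < 0:
        raise ValueError("inputs must be non-negative")
    detection_s = check_interval_s * failure_threshold
    return detection_s + ttl_s


# Illustrative settings: 30 s checks, 3 failures to trip, 60 s TTL.
print(worst_case_failover_seconds(30, 3, 60))   # 150

# The same checks with a 1-hour TTL: detection is unchanged, drain dominates.
print(worst_case_failover_seconds(30, 3, 3600))  # 3690
```

Comparing the two calls shows why TTL planning matters: tightening health checks does little if cached answers can outlive the failure by an hour.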

Second, mid-connection rehoming remains a risk with some anycast/BGP configurations. Long-lived TCP connections may assume state at a single endpoint, which can complicate rapid failover or path changes. This is a known nuance in anycast routing and is a reason to pair edge routing with DNS-based decisions and careful session management. (blog.cloudflare.com)

Third, egress costs and cross-cloud data movement can erode the cost benefits of multi-cloud architectures if not managed. Modern networks favor proactive planning around peering, direct interconnects, and traffic engineering to minimize expensive cross-cloud transfers. Industry analyses and vendor guidance point to these concerns as central to sustaining both performance and cost efficiency. (megaport.com)
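To see how cross-cloud transfers add up, a simple estimate multiplies monthly volume on each path by a per-GB rate. The rates and volumes below are placeholders, not published prices, and the `monthly_egress_cost` name is hypothetical; real pricing is tiered and varies by provider, region, and interconnect type.

```python
from typing import Dict, Tuple

Path = Tuple[str, str]  # (source cloud, destination cloud)


def monthly_egress_cost(
    flows_gb: Dict[Path, float],
    rate_per_gb: Dict[Path, float],
) -> float:
    """Sum volume * rate over every path.

    Raises KeyError if a path has traffic but no rate, so gaps in the
    rate table are noticed instead of silently counted as free.
    """
    total = 0.0
    for path, gigabytes in flows_gb.items():
        total += gigabytes * rate_per_gb[path]
    return total


# Placeholder numbers for illustration only.
flows = {("aws", "gcp"): 1000.0, ("gcp", "azure"): 500.0}
rates = {("aws", "gcp"): 0.09, ("gcp", "azure"): 0.08}

print(round(monthly_egress_cost(flows, rates), 2))
```

Running the same calculation against a proposed routing change gives a quick check on whether a latency win comes with a cost increase.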

Putting domain data to work in a resilient DNS and routing strategy

Domain datasets across TLDs can support resilience testing and global traffic management planning. In practice, teams may catalog or reference domain inventories by TLD to simulate failover scenarios, measure resolution times from diverse geographies, and validate that DNS and edge routing behave as expected under load. For organizations looking to explore domain datasets as part of a broader domain strategy, WebAtla’s offerings provide listings by TLD and country, which can be useful for testing and benchmarking in a multi-cloud context. For example, you can explore the main .ar TLD page and related domains to understand global dispersion of endpoints and registrars: List of domains in the .ar TLD. You can also browse the broader catalog of domains by TLD: List of domains by TLD, and review pricing for access: Pricing.

Incorporating these datasets into a testing and validation workflow helps ensure DNS failover and edge routing decisions are grounded in real-world domain geography and registrar distributions. While this article focuses on routing strategies, the ability to reference diverse domain inventories can support more robust geo-aware testing and traffic-shaping scenarios.
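One way to use a domain list in such a workflow is to time DNS resolution for each entry. The sketch below uses Python's standard `socket.gethostbyname` by default and lets you inject a different resolver for testing; it measures only from the machine it runs on, so covering diverse geographies would require running it from multiple vantage points. The `time_resolution` name is hypothetical.

```python
import socket
import time
from typing import Callable, Dict, Iterable, Tuple


def time_resolution(
    domains: Iterable[str],
    resolve: Callable[[str], object] = socket.gethostbyname,
    clock: Callable[[], float] = time.perf_counter,
) -> Dict[str, Tuple[bool, float]]:
    """Resolve each domain once and record (succeeded, elapsed milliseconds).

    Lookup failures (socket.gaierror is a subclass of OSError) are recorded
    as unsuccessful rather than aborting the whole run.
    """
    results: Dict[str, Tuple[bool, float]] = {}
    for domain in domains:
        start = clock()
        try:
            resolve(domain)
            succeeded = True
        except OSError:
            succeeded = False
        results[domain] = (succeeded, (clock() - start) * 1000.0)
    return results
```

Calling `time_resolution(["example.com"])` would query the system resolver. Note that repeated runs may hit local or upstream caches, so timings are best compared across runs with similar cache state.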

Expert insight

In practice, experienced network architects emphasize that latency-aware routing is not a single knob to turn but an ongoing workflow. Anycast and BGP provide robust, scalable routing foundations, but effective latency reduction also requires telemetry, policy discipline, and careful consideration of the trade-offs between speed, stability, and cost. This aligns with industry guidance on edge routing and DNS-based traffic steering, which stresses combining fast-path routing with observability to deliver durable improvements. (blog.cloudflare.com)

Real-world integration: how CloudRoute and WebAtla complement each other

CloudRoute helps teams design and operate advanced cloud routing and traffic engineering to reduce latency and optimize multi-cloud performance. The approach is to align the technical controls (edge routing, BGP optimization, and DNS failover) with practical datasets and testing workflows. WebAtla’s domain catalogs by TLD can support resilience testing and geo-aware validation, giving practitioners a way to model and test failover scenarios across diverse geographies and registrars. The two fit together naturally: use domain data to stress-test DNS failover policies, validate edge routing choices, and confirm end-user performance across the globe.

See the client resources for domain datasets and pricing: List of domains in the .ar TLD, List of domains by TLD, and Pricing.

Conclusion: a practical path to faster, more reliable multi-cloud networks

Cloud routing optimization sits at the intersection of edge routing, DNS intelligence, and cross-cloud connectivity. By combining anycast/BGP-based routing with DNS failover policies, teams can reduce latency and improve uptime across AWS, GCP, and Azure footprints. The four-pillar framework - Observe, Decide, Act, Verify - provides a disciplined approach to optimizing routing decisions while maintaining guardrails around mid-connection state changes and cost. As multi-cloud architectures continue to evolve, the value of integrated visibility and layered control will only grow, helping organizations deliver consistently fast and reliable user experiences.