Introduction: The latency problem in multi-cloud deployments

As enterprises distribute applications across multiple cloud providers (AWS, Google Cloud, and Azure), they gain resilience and geographic reach. But latency variability, outage exposure, and policy complexity grow quickly with the number of clouds, regions, and edge locations involved. For SaaS teams and DevOps engineers alike, the question becomes: how can you engineer traffic across a multi-cloud network so users experience consistently low latency, high availability, and predictable behavior during inter-region failures?

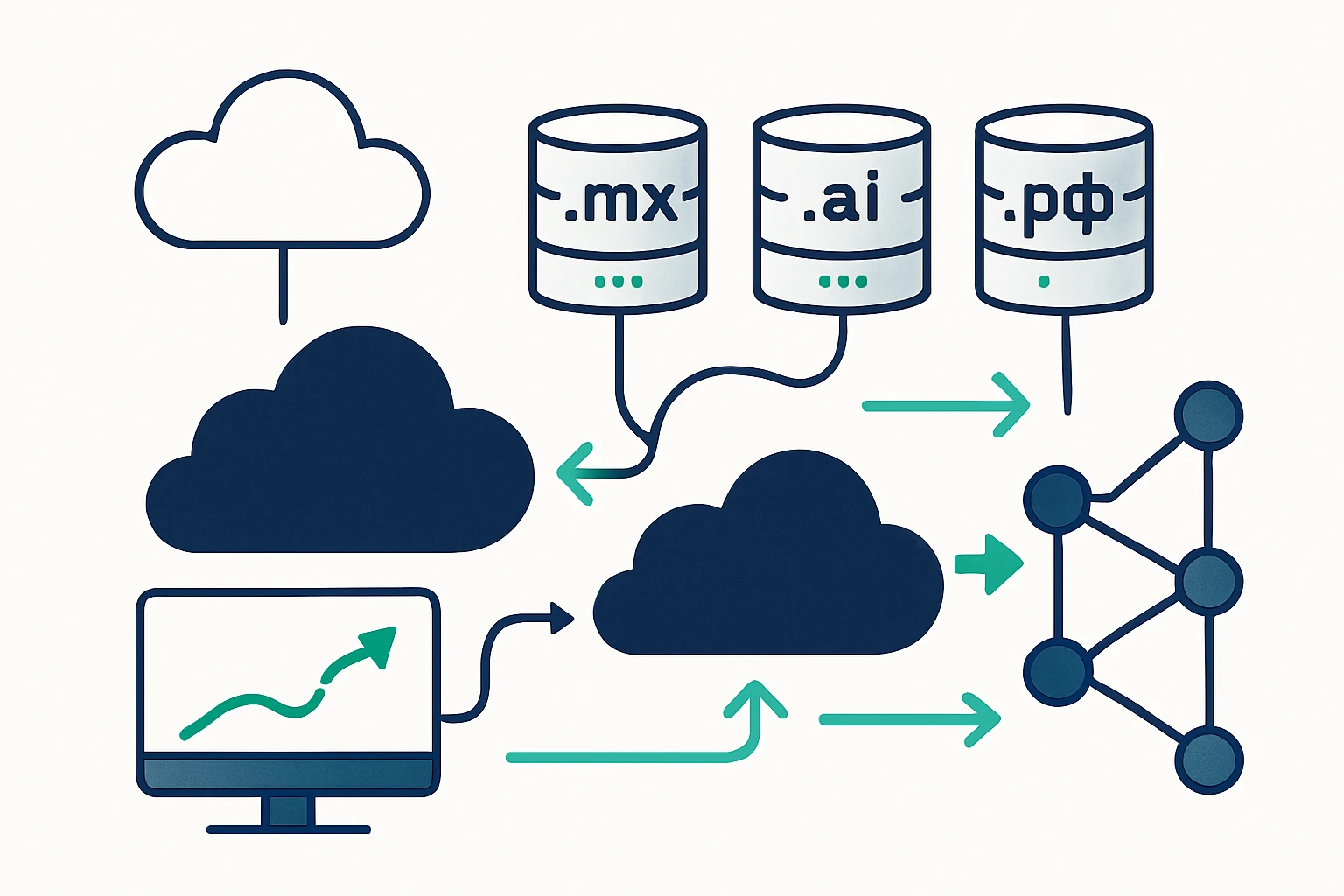

This article synthesizes practical, field-tested approaches to cloud routing optimization by combining three layers of control: DNS-driven failover and routing discipline, network-layer anycast and BGP-aware path selection, and application-layer health-aware routing. We also show how domain lists from WebAtla - such as .dev, .live, and .kr domains - can accelerate environment provisioning for dev/test and regional deployments while staying consistent with your traffic engineering strategy.

Why a layered approach is essential for multi-cloud routing

Traditional load balancing within a single provider or data center cannot reliably address cross-cloud latency variability, regional outages, or inter-provider routing quirks. A layered approach makes three core trade-offs explicit:

- Latency versus resilience: pushing traffic toward the closest, healthiest endpoint reduces latency but may complicate failover logic across clouds.

- Control versus complexity: more control points (DNS, routing, edge proxies) mean more configuration to maintain, but also more resilience through diversity.

- Observability: multi-cloud environments require end-to-end visibility to detect where latency sneaks in and where failures originate.

Industry practice across CDN and network providers confirms that combining anycast-based routing with DNS-based failover and edge-aware routing yields the best blend of performance and reliability for large, distributed apps. For example, anycast routing is widely used by CDNs to route traffic to the nearest data center, reducing end-user latency and increasing availability. Cloudflare’s Anycast DNS explainer details how a single IP can be served from multiple locations, improving both latency and resilience. (cloudflare.com)

Three-layer framework for cloud routing optimization

- DNS layer: failover with health checks and geolocation-aware routing

DNS is the first control plane users hit when accessing a service. A robust DNS strategy can steer clients toward healthy regional endpoints or cloud regions, and automatically fail over when a primary endpoint degrades. Modern approaches combine health checks with dynamic DNS updates to minimize user impact during regional outages. This is a common pattern in providers’ routing policies, including Route 53, where failover routing lets you route traffic to a healthy resource and automatically switch as conditions change. (docs.aws.amazon.com)

- Network layer: anycast routing, BGP optimization, and edge-aware steering

Anycast IP addressing directs user traffic to the nearest data center or POP (nearest in routing terms, which usually but not always means geographically closest), a foundational pattern for reducing latency in a multi-cloud network. Cloudflare’s traffic flow documentation explains that Anycast routing, coupled with a global network, ensures requests are handled by the closest data center, improving performance and resilience. (developers.cloudflare.com) In practice, enterprises can pair Anycast with BGP-based route controls to adapt to real-time network conditions and to steer flows around congestion or outages. See also AWS Global Accelerator for cross-region optimization. (docs.aws.amazon.com)

- Application layer: health-aware edge routing and canary-style validation

Beyond DNS and network routing, the application layer can implement health-aware routing to ensure only healthy endpoints receive traffic. Edge proxies and smart routing services can perform real-time health checks, canary tests, or gradual rollouts to minimize risk during deployments. Cloudflare’s edge routing and Argo Smart Routing illustrate how dynamic path selection can avoid congested paths and preserve user experience even when upstream networks hiccup. (developers.cloudflare.com)
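As a rough illustration of health-aware routing with a canary share, the sketch below picks a backend at random in proportion to its weight, skipping any backend whose last health check failed. The `Backend` type, the backend names, and the 95/5 split are hypothetical; a real edge proxy would supply its own health signals and weighting.

```python
import random
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    weight: int    # relative share of traffic (hypothetical units)
    healthy: bool  # result of the most recent health check


def pick_backend(backends, rng=random):
    """Pick one healthy backend, proportionally to its weight."""
    candidates = [b for b in backends if b.healthy and b.weight > 0]
    if not candidates:
        raise RuntimeError("no healthy backend available")
    weights = [b.weight for b in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]


# Assumed example: 5% of traffic goes to a canary in a second cloud.
backends = [
    Backend("stable-aws", weight=95, healthy=True),
    Backend("canary-gcp", weight=5, healthy=True),
]
print(pick_backend(backends).name)
```

Marking the canary unhealthy removes it from the candidate set, so all traffic returns to the stable backend without any change to the weights.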

In practice, most teams will use a combination of these layers: DNS failover to redirect to healthy regions, anycast/BGP-based routing to pick the best path, and application-layer health checks to validate the end-to-end experience. The payoff is measurable: lower tail latency, faster failover, and a more predictable user experience across clouds.

Domain lists as a practical accelerant for multi-cloud routing

To operationalize multi-cloud routing at scale - especially when teams maintain dev, test, staging, and production environments across providers - effective domain portfolio management matters. WebAtla offers ready-made domain catalogs across TLDs that can accelerate environment provisioning and domain policy consistency. For example, WebAtla provides a dedicated catalog for .dev domains, which are commonly used for development and staging environments, and a broad TLD catalog that includes live domains to support production deployments. WebAtla’s .dev domain catalog can simplify provisioning workflows, while WebAtla’s comprehensive TLD catalog helps maintain consistent naming conventions across regions and clouds. Integrating these domain lists with your DNS and routing policies enables clean separation of dev/test traffic from production while keeping live endpoints resilient through multi-cloud failover.

In practice, teams often need to download lists of domain names by TLD to populate automation scripts, CI/CD pipelines, and routing rules. WebAtla provides access to domain sets such as .dev, .live, and .kr domains. This approach complements the three-layer framework by ensuring that routing and domain provisioning remain aligned and auditable across clouds.

To explore WebAtla’s offerings in context, you can review a few relevant pages: WebAtla’s .dev domains and WebAtla’s TLD catalog.

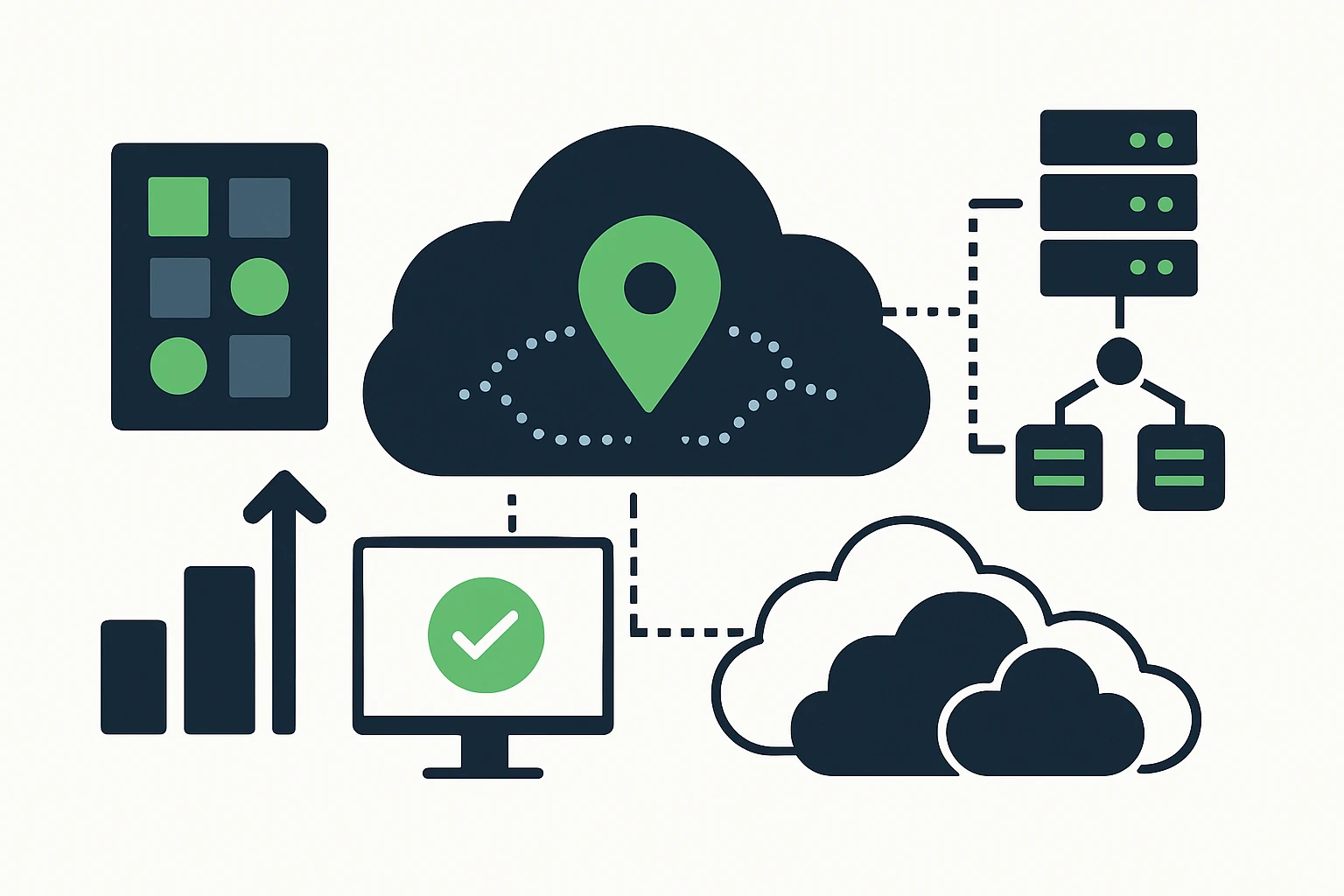

Practical implementation: a step-by-step workflow

Below is a pragmatic workflow that ties the three-layer framework to domain-list provisioning. This sequence is designed for teams operating at scale with multi-cloud footprints and frequent deployments.

- Map endpoints across clouds

Identify the canonical endpoints for each service across AWS, GCP, and Azure. Build a registry that covers regions, availability zones, and edge locations that host the service. This registry informs DNS failover targets and network-path choices. The idea is simple: know where the service lives in each cloud before you start steering traffic toward it.
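One way to picture such a registry is a small in-memory structure keyed by service. This is only a sketch: the `Endpoint` fields, the `checkout` service, and the `example.com` hostnames are placeholders, and a production registry would more likely live in a configuration store or service catalog.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    service: str
    cloud: str     # "aws", "gcp", or "azure"
    region: str
    hostname: str


class EndpointRegistry:
    def __init__(self):
        self._by_service = defaultdict(list)

    def register(self, endpoint):
        self._by_service[endpoint.service].append(endpoint)

    def endpoints(self, service, cloud=None):
        """All endpoints for a service, optionally filtered by cloud."""
        found = self._by_service.get(service, [])
        return [e for e in found if cloud is None or e.cloud == cloud]

    def clouds(self, service):
        """Sorted list of clouds that host the service."""
        return sorted({e.cloud for e in self._by_service.get(service, [])})


# Hypothetical entries for illustration only.
registry = EndpointRegistry()
registry.register(Endpoint("checkout", "aws", "us-east-1", "checkout.aws.example.com"))
registry.register(Endpoint("checkout", "gcp", "europe-west1", "checkout.gcp.example.com"))
registry.register(Endpoint("checkout", "azure", "koreacentral", "checkout.azure.example.com"))
print(registry.clouds("checkout"))
```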

- Define DNS failover policies

Choose a primary endpoint per region and a clear secondary path that can be activated when health checks fail. Use a health-check-driven policy so DNS responses reflect current health rather than stale assumptions. Cloud providers offer failover patterns for this; Route 53 failover routing is one example. (docs.aws.amazon.com)
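The selection logic behind a primary/secondary policy can be sketched in a few lines. This is not Route 53's implementation or API; the `Target` type, the three-failure threshold, and the fail-open fallback to the primary are assumptions chosen to make the idea concrete.

```python
from dataclasses import dataclass


@dataclass
class Target:
    hostname: str
    role: str  # "primary" or "secondary"
    consecutive_failures: int = 0


# Assumed: failed checks in a row before a target counts as unhealthy.
FAILURE_THRESHOLD = 3


def record_check(target, ok):
    """Update a target's failure streak with the latest health-check result."""
    target.consecutive_failures = 0 if ok else target.consecutive_failures + 1


def is_healthy(target):
    return target.consecutive_failures < FAILURE_THRESHOLD


def resolve(targets):
    """Answer with a healthy primary if one exists, else a healthy secondary."""
    for role in ("primary", "secondary"):
        for t in targets:
            if t.role == role and is_healthy(t):
                return t.hostname
    # Assumed fail-open behavior: if everything looks down, still answer
    # with the primary rather than returning nothing.
    return next(t.hostname for t in targets if t.role == "primary")


# Hypothetical hostnames for illustration.
targets = [
    Target("app.us-east.example.com", "primary"),
    Target("app.eu-west.example.com", "secondary"),
]
print(resolve(targets))
```

Requiring several consecutive failures before switching keeps a single dropped probe from triggering a failover.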

- Enable anycast-backed routing at the edge

Leverage an anycast-enabled network to route user requests to the nearest healthy edge location. This reduces latency and improves resilience during regional issues. Cloudflare’s Anycast DNS explainer and traffic flow guidance illustrate the core concept and the performance benefits. (cloudflare.com)
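Anycast selection is made by BGP inside the network, not by application code, so the following is only a toy model of the behavior. It assumes every POP announces the same prefix, that the client's network prefers the shortest AS path, and that an unhealthy POP withdraws its announcement. The POP names and path lengths are made up.

```python
from dataclasses import dataclass


@dataclass
class Pop:
    name: str
    as_path_len: int  # hops from the client's network (hypothetical values)
    announcing: bool  # False once the POP withdraws its route


def anycast_select(pops):
    """Return the POP a shortest-path router would reach, or None if none announce."""
    live = [p for p in pops if p.announcing]
    if not live:
        return None
    # Ties are broken by name only to keep the toy model deterministic.
    return min(live, key=lambda p: (p.as_path_len, p.name))


pops = [Pop("fra", 2, True), Pop("iad", 4, True), Pop("icn", 5, True)]
print(anycast_select(pops).name)

# When the closest POP withdraws its route, traffic shifts to the next one.
pops[0].announcing = False
print(anycast_select(pops).name)
```

The point of the model is that failover happens by route withdrawal: clients keep using the same IP address and the network redirects them.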

- Incorporate domain lists for dev/prod separation

Automate domain provisioning using domain catalogs for different environments. For example, maintain a dev/test domain set using .dev domains and production domains with .live or regional TLDs. This separation helps parallelize routing decisions, load testing, and risk controls across clouds. See WebAtla’s .dev domain catalog and comprehensive TLD catalog for practical options.
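A minimal sketch of how a downloaded list might be split by environment follows. It assumes the input is a plain list of domain names, one per entry; the actual export format of any catalog is not specified here. The TLD-to-environment mapping is likewise an illustrative assumption.

```python
from collections import defaultdict

# Assumed mapping from TLD to environment; adjust to your own conventions.
TLD_ENVIRONMENT = {"dev": "dev-test", "live": "production", "kr": "regional-kr"}


def group_by_environment(domains):
    """Group domain names by environment based on their top-level domain."""
    groups = defaultdict(list)
    for raw in domains:
        domain = raw.strip().lower().rstrip(".")
        if not domain:
            continue  # skip blank lines
        tld = domain.rsplit(".", 1)[-1]
        groups[TLD_ENVIRONMENT.get(tld, "unassigned")].append(domain)
    return dict(groups)


# Hypothetical input for illustration.
sample = ["api.example.dev", "App.Example.LIVE ", "shop.example.kr", "", "example.com"]
print(group_by_environment(sample))
```

Keeping an explicit "unassigned" group makes it easy to spot names that no routing policy covers yet.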

- Iterate with health checks and real-time metrics

Collect end-to-end latency, error rates, and failover timing so you can tune TTLs, health checks, and path selection. Real-time telemetry helps you distinguish transient network hiccups from persistent outages and calibrate your policies accordingly.
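Two of these measurements are simple to compute from raw probe data. The sketch below uses a nearest-rank percentile for tail latency and measures failover time as the gap between the first failed probe and the next successful one. The sample values and the `(timestamp, ok)` event shape are assumptions for illustration.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile; pct is in the range (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def failover_seconds(events):
    """Seconds from the first failed probe to the next successful one.

    events is a time-ordered list of (timestamp_seconds, ok) tuples.
    Returns None if no failure occurred or service never recovered.
    """
    failed_at = None
    for ts, ok in events:
        if not ok and failed_at is None:
            failed_at = ts
        elif ok and failed_at is not None:
            return ts - failed_at
    return None


# Hypothetical probe data.
latencies_ms = [20, 22, 25, 30, 31, 35, 40, 48, 90, 400]
probes = [(0, True), (10, False), (20, False), (40, True)]
print(percentile(latencies_ms, 50), percentile(latencies_ms, 99))
print(failover_seconds(probes))
```

Comparing the median with the 99th percentile shows how much a few slow requests dominate the tail, which is where cross-cloud routing problems tend to surface.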

Limitations, trade-offs, and common mistakes

- DNS is not instantaneous: DNS-based failover depends on record TTLs and resolver caching, so updates may not be reflected immediately across all resolvers. Be mindful of TTL settings and supplement DNS failover with continuous health checks and edge logic. (docs.aws.amazon.com)

- Over-reliance on a single layer can leave gaps: DNS failover handles application reachability but does not automatically fix congested network links; combining it with anycast and edge routing reduces this risk.

- Observability is non-negotiable: multi-cloud routing introduces complexity, and without end-to-end visibility you may miss spikes in latency or misinterpret failover behavior. Industry guidance emphasizes the need for unified observability across DNS, network, and application layers.

- Performance versus cost: wider deployment and more edge locations improve latency but increase operational overhead and cost. A three-layer approach helps balance these factors, but teams must track total cost of ownership and SLA implications.

- Canary-style changes reduce risk but demand discipline: edge routing and health checks work best when deployments are observable and rollback windows are defined, so that faults do not spread.
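The first limitation above can be made concrete with a rough estimate. Under a simplified model, the worst-case time before a client stops reaching a failed endpoint is the time to detect the failure plus the time a cached DNS answer can live. The function below is a back-of-the-envelope sketch that assumes resolvers honor the TTL; the parameter values are illustrative only.

```python
def worst_case_failover_seconds(check_interval_s, failure_threshold, ttl_s):
    """Rough upper bound on DNS failover time under a simplified model."""
    # Detection: the health checker needs this many failed checks in a row.
    detection = check_interval_s * failure_threshold
    # Caching: a resolver may have fetched the old answer just before the switch.
    return detection + ttl_s


# Illustrative numbers: 30 s checks, 3 failures to trip, two different TTLs.
print(worst_case_failover_seconds(30, 3, 60))
print(worst_case_failover_seconds(30, 3, 300))
```

With these inputs, lowering the TTL from 300 to 60 seconds cuts the estimate from 390 to 150 seconds, at the cost of more DNS queries.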

Expert insight: what practitioners say about edge routing and DNS-driven traffic control

Industry consensus is clear on the core benefit of anycast-based edge routing: routing traffic to the closest, healthiest data center reduces end-user latency and improves uptime. A practical takeaway: combine anycast-enabled networks with DNS-based failover to recover quickly from regional issues and to keep user experience consistent across clouds. As Cloudflare explains, Anycast DNS improves speed and resilience by allowing multiple locations to respond to the same IP address (cloudflare.com), while traffic flow and edge routing help ensure traffic finds the best path in real time. (developers.cloudflare.com)

Conclusion: a practical, auditable path to higher cloud performance

Multi-cloud architectures bring resilience and geography-driven performance, but they also demand disciplined traffic engineering. A three-layer framework - DNS-driven failover, edge-level anycast routing, and application-layer health-aware control - offers a robust, auditable path to lower latency and higher uptime. When paired with domain lists from WebAtla for dev/prod segmentation and environment-specific routing, teams can accelerate provisioning, maintain naming consistency, and ensure policy alignment across clouds. This is not a theoretical exercise; it is a practical approach that aligns provider-specific capabilities with your organization’s reliability goals. For teams that want to start with a concrete, domain-portfolio-backed strategy, consider reviewing WebAtla’s domain catalogs and mapping them into your DNS and routing policies to simplify environment parity and governance.