When your SaaS platform serves users across continents, the difference between a snappy experience and a sluggish one is often a matter of routing. Traditional, static networking can leave critical paths congested or misrouted, inflating latency and increasing the risk of regional outages. Enterprises running multi‑cloud architectures on AWS, Google Cloud, and Azure need a latency‑aware approach to traffic routing that combines DNS intelligence, BGP awareness, and edge routing capabilities. This article offers a practical, non-promotional framework for engineers and decision‑makers who want to improve cloud network performance without overhauling their entire architecture.

Why latency matters in a multi‑cloud world

Latency is not just a number on a dashboard; it directly affects user perception, conversion rates, and service-level reliability. In multi‑cloud environments, the path a user’s request takes can vary dramatically depending on geography, peering, and the health of each cloud region. Latency‑based routing, when implemented correctly, sends users to the nearest healthy region or edge node, reducing round‑trip time and accelerating critical API calls. Cloud providers themselves offer latency‑aware routing options within their DNS or traffic management services, illustrating a broader industry shift toward latency‑conscious design. For example, leading cloud platforms document how latency‑based routing can route end users to the region with the lowest measured latency, often in combination with health checks and failover capabilities. (docs.aws.amazon.com)

A practical framework for latency‑aware traffic engineering

Below is a four‑phase framework you can adapt to your organization’s needs. It emphasizes observable data, clear decision logic, actionable controls, and ongoing verification, without locking you into a single vendor.

1) Observe: measure, monitor, and model latency in real time

- Collect end‑to‑end latency metrics from the user to each cloud region or edge location that your service relies on. This includes geo‑based measurements as well as active probing from synthetic monitors and real user telemetry.

- Track regional health signals (uptime, error rates) and CDN/edge performance to identify when a region becomes suboptimal to route through.

- In multi‑cloud contexts, gather cross‑cloud latency data (e.g., connection and response times from AWS, GCP, and Azure endpoints) to build a single view of latency per region and provider. See industry guidance on latency‑aware routing and automated path computation for large, multi‑domain networks. (juniper.net)
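The active‑probing step above can be sketched in a few lines of Python. This is a minimal illustration, not a production monitor: it assumes TCP connect time is an acceptable proxy for network latency, and the function names (`tcp_connect_ms`, `summarize`) are made up for this example.

```python
import math
import socket
import statistics
import time
from typing import Optional


def tcp_connect_ms(host: str, port: int = 443, timeout: float = 2.0) -> Optional[float]:
    """Return the TCP connect time to host:port in milliseconds, or None on failure."""
    start = time.perf_counter()
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return (time.perf_counter() - start) * 1000.0
    except OSError:
        # Timeouts, refused connections, and DNS errors all count as a failed probe.
        return None


def summarize(samples: list) -> dict:
    """Summarize probe results: median and p95 latency plus the failure rate."""
    ok = sorted(s for s in samples if s is not None)
    if not ok:
        return {"median_ms": float("inf"), "p95_ms": float("inf"), "failure_rate": 1.0}
    # Nearest-rank p95: the smallest sample with at least 95% of samples at or below it.
    rank = max(1, math.ceil(0.95 * len(ok)))
    return {
        "median_ms": statistics.median(ok),
        "p95_ms": ok[rank - 1],
        "failure_rate": 1 - len(ok) / len(samples),
    }
```

In practice you would call `tcp_connect_ms` repeatedly against one endpoint per region from several vantage points, then feed each region's samples to `summarize`. Real user telemetry should sit alongside these synthetic numbers, since probes only see the paths from where they run.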

2) Decide: define routing policies that optimize for latency and reliability

- Latency‑based routing (LBR) via DNS directs users to the region or edge with the lowest latency. This is commonly used in public DNS services and is often complemented by regional health checks to avoid routing to degraded endpoints. Examples and official guidance from major providers illustrate how this policy operates in practice. (docs.aws.amazon.com)

- Geographic routing and proximity routing can steer traffic to the nearest data center or cloud region, balancing latency with regional capacity and cost considerations.

- Edge and anycast routing can improve perceived latency by letting the same IP address be answered by multiple edge locations. This reduces the distance data must travel and can shrink RTTs when routing is optimal. Industry analyses and vendor whitepapers describe how anycast routing achieves these gains. (umbrella.cisco.com)
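The decision logic behind these policies can be made concrete with a small sketch. It picks the healthy region with the lowest p95 latency; the hysteresis margin (`min_gain_ms`) is an assumption added here to avoid flapping between two near‑equal regions, and the type and region names are illustrative only.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class RegionStatus:
    name: str        # illustrative label, e.g. "aws-us-east-1"
    p95_ms: float    # observed p95 latency from the measurement phase
    healthy: bool    # result of the latest health check


def pick_region(
    regions: Iterable[RegionStatus],
    current: Optional[str] = None,
    min_gain_ms: float = 10.0,
) -> Optional[str]:
    """Pick the lowest-latency healthy region, with hysteresis around the current one."""
    candidates = [r for r in regions if r.healthy]
    if not candidates:
        return None  # nothing healthy: the caller must fall back to a static default
    best = min(candidates, key=lambda r: r.p95_ms)
    for r in candidates:
        # Stay on the current region unless the best one is meaningfully faster.
        if r.name == current and r.p95_ms - best.p95_ms < min_gain_ms:
            return r.name
    return best.name
```

An unhealthy region is never selected, even if it has the lowest latency, which mirrors the pairing of latency routing with health checks described above.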

3) Act: implement controls that can be tuned in real time

- DNS‑level routing: Use latency‑aware DNS records and health checks to shift user traffic away from unhealthy or congested regions. AWS Route 53’s latency routing is a widely used example of this approach. (docs.aws.amazon.com)

- BGP and anycast: In a multi‑cloud environment, BGP policy can influence path selection, and that policy often reflects business and economic agreements as well as topology. It’s important to note that BGP typically optimizes for reachability and policy compliance, not necessarily the lowest latency, so you’ll want to pair BGP strategies with latency‑aware decision logic. For a broader view on how BGP routing decisions interact with network latency, see industry‑standard references and practitioner guides. (cisco.com)

- Edge orchestration and health checks: Implement real‑time health monitoring of services across clouds and raise alerts when a region becomes unhealthy. This allows automatic rerouting and faster failover. Cloud providers emphasize health‑aware routing and failover capabilities as part of resilient architectures. (aws.amazon.com)
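As a sketch of the DNS‑level control, the snippet below builds a Route 53 change batch with one latency record per endpoint, each tied to a health check. The record shape follows the Route 53 `ChangeResourceRecordSets` API; the helper names, hostnames, IP addresses (documentation ranges), and health check IDs are placeholders invented for this example.

```python
def latency_record_change(name, region, ip, health_check_id, ttl=60):
    """Build one UPSERT change for a latency-routed A record tied to a health check."""
    return {
        "Action": "UPSERT",
        "ResourceRecordSet": {
            "Name": name,
            "Type": "A",
            # SetIdentifier must be unique among records sharing the same name and type.
            "SetIdentifier": f"{name}-{region}",
            # Region is the AWS region whose measured latency Route 53 uses for this record.
            "Region": region,
            "TTL": ttl,
            "ResourceRecords": [{"Value": ip}],
            "HealthCheckId": health_check_id,
        },
    }


def build_change_batch(name, endpoints, ttl=60):
    """Build a change batch with one latency record per endpoint dict."""
    return {
        "Comment": "latency-based records with health checks",
        "Changes": [
            latency_record_change(name, e["region"], e["ip"], e["health_check_id"], ttl)
            for e in endpoints
        ],
    }


# Placeholder endpoints: documentation IPs and made-up health check IDs.
endpoints = [
    {"region": "us-east-1", "ip": "192.0.2.10", "health_check_id": "hc-placeholder-1"},
    {"region": "eu-west-1", "ip": "198.51.100.20", "health_check_id": "hc-placeholder-2"},
]
batch = build_change_batch("api.example.com.", endpoints)
```

The resulting `batch` would be passed as the `ChangeBatch` argument to `change_resource_record_sets` on a boto3 Route 53 client, together with your hosted zone ID. Nothing here calls AWS, so the structure can be reviewed or unit‑tested before it is applied.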

4) Verify: continuously validate performance and adjust as needed

- Establish a feedback loop that compares observed latency to target SLAs, updating routing policies as regions drift in performance. Periodic post‑mortems after incidents help identify gaps between planned routing behavior and real‑world outcomes.

- Run regular game days or chaos experiments to test failover scenarios across clouds and regions, ensuring that latency and uptime objectives hold under realistic conditions. DNS failover planning benefits from proactive testing and TTL tuning to balance responsiveness with query load. (usavps.com)
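The feedback loop can start as a simple comparison of observed latency against the target. The sketch below flags regions whose p95 exceeds the SLA target by more than a tolerance; the 10% default and the function name are assumptions for illustration, not recommended values.

```python
def sla_breaches(observed_p95_ms: dict, target_ms: float, tolerance: float = 0.10) -> dict:
    """Return regions whose p95 exceeds the target by more than the tolerance.

    The value for each flagged region is observed / target, rounded to 2 decimals.
    """
    limit = target_ms * (1 + tolerance)
    return {
        region: round(p95 / target_ms, 2)
        for region, p95 in observed_p95_ms.items()
        if p95 > limit
    }
```

Running this on each measurement cycle, and again during game days, gives a consistent signal for when a routing policy needs revisiting rather than relying on ad‑hoc dashboard checks.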

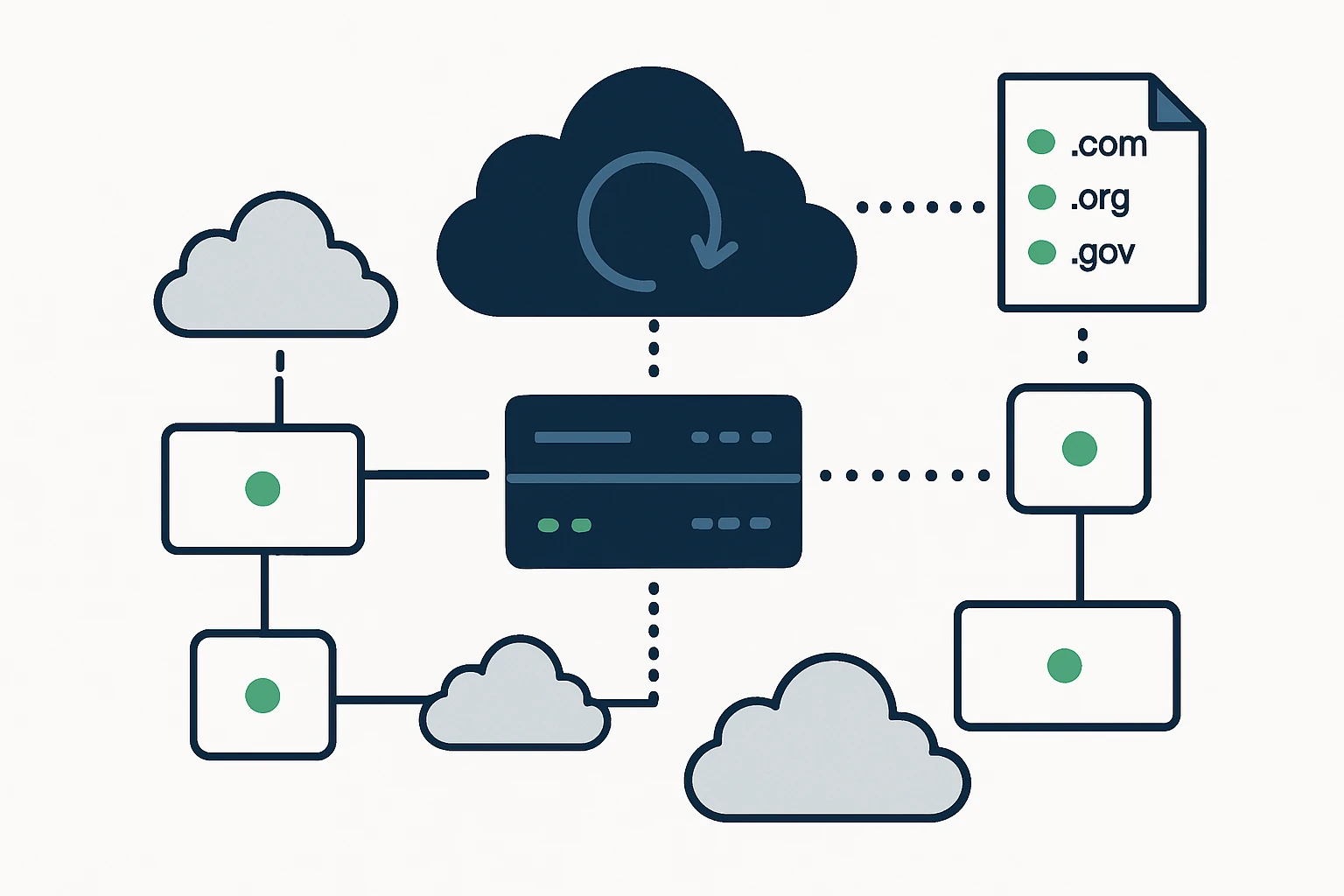

The traffic engineering (TE) decision matrix: DNS, BGP, and anycast - how they fit together

To avoid vendor lock‑in and to balance performance with complexity, most mature multi‑cloud networks blend multiple routing techniques. The matrix below summarizes typical use cases, benefits, and trade‑offs. The ideas here are grounded in established practice documented by cloud providers and networking vendors.

- DNS‑based latency routing: Pros: simple to deploy, works across clouds, integrates with health checks. Cons: TTLs delay the effect of changes, resolver caches can keep serving stale answers, and regional DNS outages can disrupt steering. Best for gradual traffic shifts and regional optimization.

- Anycast routing: Pros: can reduce end‑to‑end latency by steering clients to the closest POP. Cons: harder to debug and to traffic‑engineer, with potential routing divergence across providers. Useful at the edge and for CDN‑like layers.

- BGP optimization: Pros: direct control over routing at the network edge. Cons: decisions are influenced by ISP policies and may not reflect real‑time latency, and changes often require coordination with carriers and on‑prem networks.

When used in combination, you can achieve a resilient, latency‑aware multi‑cloud network. For example, you might route users with latency‑based DNS to the nearest healthy region, while using BGP policies to optimize upstream paths where feasible, and deploying anycast at the edge to shorten the final hop. Studies and practitioner resources across the industry corroborate the value of anycast for reducing latency and improving resilience in distributed networks. (blog.apnic.net)

DNS failover strategies and latency: what to know

DNS failover is a critical piece of the latency and reliability puzzle, but it comes with caveats. DNS‑level failover can offer rapid regional redirection when endpoints fail, but propagation and DNS caching can delay cutover. A well‑designed strategy combines fast health checks, low TTL values for critical records, and in‑DNS traffic steering to minimize user impact. Industry guides and practitioner articles emphasize aligning failover mechanisms with realistic service levels, rehearsing failover scenarios, and balancing load with TTL tuning. (usavps.com)
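A rough way to reason about the TTL trade‑off is to estimate the worst‑case cutover time and the query load a given TTL implies. The sketch below uses a deliberately simple model: detection takes the health check interval times the number of consecutive failures required, and every cache holds a record for at most one TTL. Both function names are illustrative, and resolvers or clients that ignore TTLs will stretch the real figure.

```python
def worst_case_failover_s(check_interval_s: float, failure_threshold: int, ttl_s: float) -> float:
    """Upper-bound estimate: time to detect the failure plus time for caches to expire."""
    detection_s = check_interval_s * failure_threshold
    return detection_s + ttl_s


def max_authoritative_qps(caching_resolvers: int, ttl_s: float) -> float:
    """Rough ceiling on queries/sec for one record if each resolver refetches once per TTL."""
    return caching_resolvers / ttl_s
```

For example, a 30‑second check interval with a threshold of 3 and a 60‑second TTL gives an estimated worst case of 150 seconds. Dropping to a 10‑second interval and a 30‑second TTL gives 60 seconds, but doubles the estimated query ceiling for the same resolver population.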

Limitations and common mistakes in latency‑aware TE (a quick reality check)

- Relying on a single control plane: A sole focus on DNS‑based routing can lead to stale decisions if health signals aren’t timely or comprehensive. Combine DNS with real‑time telemetry and, where possible, cross‑cloud health checks.

- Ignoring BGP dynamics: BGP is powerful, but it doesn’t inherently minimize latency. If latency is your KPI, you must couple BGP with latency‑aware decision logic and edge routing strategies. This hybrid approach is echoed by routing practice papers and vendor guidance. (cisco.com)

- Over‑TTLing critical records: Long TTLs reduce DNS query load but slow failover when regional health degrades. Shorter TTLs improve responsiveness but increase query traffic, so tune them based on traffic patterns and SLA requirements. (usavps.com)

- Underestimating the edge: Latency improvements at the edge depend on the geographic distribution of your users and the density of edge locations. If your edge footprint doesn’t match user geography, latency benefits may be muted.

- Under‑documenting failure modes: A failure in one cloud should not cascade. Document one or two clear failover paths and validate them under load to avoid ad‑hoc routing decisions during incidents.

A structured block you can reuse: TE Decision Framework

- Observation metrics - latency to each region, regional health, edge load, DNS cache effectiveness.

- Policy design - latency‑aware DNS, geolocation, and proximity routing with health checks, cross‑cloud BGP considerations.

- Action controls - DNS TTL tuning, latency routing rules, edge routing deployments, and, where applicable, BGP policy changes.

- Verification cadence - SLA comparisons, post‑mortems, and periodic game days to stress test failover.

Real‑world context: where cloud routing improves SaaS performance

Latency‑aware traffic engineering is not a theoretical exercise; it maps to concrete outcomes like faster API responses, smoother user experiences, and higher uptime in multi‑cloud contexts. Latency‑based routing, when paired with health checks and edge routing, can lead to measurable reductions in user‑perceived latency and improved resilience during regional outages. References from major cloud providers and routing practitioners describe these patterns and their benefits in terms of set‑based routing, health‑check integration, and edge processing. (docs.aws.amazon.com)

Integrating the client: domain data as part of the routing research toolkit

In-depth routing research often benefits from rich, diverse data sources about internet topology and domain holdings. The client offers a portfolio of domain data pages that illustrate how internet infrastructure maps to regional routing considerations. For readers who want to explore domain lists by TLD as a resource in network research or threat intelligence, the following pages are useful anchors: download list of .digital domains, download list of .art domains, download list of .tw domains. These data sources can complement latency modeling by providing visibility into global domain distribution and DNS ecosystems.

Conclusion: a disciplined, adaptable approach to cloud routing

Latency and uptime across a multi‑cloud footprint require more than one tool or one provider. The most resilient architectures blend DNS‑level latency awareness, cross‑cloud routing insights, and edge performance to steer users toward the healthiest, nearest endpoints in real time. By observing real‑world performance, codifying decisions into clear routing policies, acting with flexible controls, and verifying outcomes continuously, teams can reduce latency, improve reliability, and better meet customer expectations. And as the internet’s routing fabric evolves - with anycast at the edge, smarter DNS, and more intelligent inter‑cloud routing - the opportunity to optimize becomes broader, not narrower.

For organizations exploring advanced DNS and traffic engineering capabilities, reference materials from cloud providers and network vendors offer concrete, field‑tested guidance to inform your design. And if your research touches on public data about global domain distribution, the client’s domain lists across TLDs can provide a practical data source to enrich your analysis.