Introduction: solving latency in a multi-cloud world

For modern SaaS platforms and enterprise workloads, latency is more than a comfort metric - it’s a differentiator. As teams distribute workloads across AWS, Google Cloud Platform, and Microsoft Azure, the network path from users to services becomes a mosaic of regional peering, cloud-native routing, and edge infrastructure. The question isn’t whether to adopt multi-cloud networking; it’s how to orchestrate routing and traffic engineering to minimize latency, maximize uptime, and preserve a consistent user experience across geographies. This article distills practical approaches - grounded in the realities of cross-cloud traffic, edge delivery, and DNS-based resilience - that cloud operations, DevOps, and network teams can adopt today. It also offers a structured framework you can tailor to your architecture and budget without locking into a single vendor.

Across the industry, successful latency reductions come from a combination of edge-aware routing, smarter control plane design, and observability that closes the feedback loop between measurement and action. As you read, you’ll see how core concepts like Anycast routing, BGP optimization, and DNS failover interact with multi-cloud architectures to deliver measurable improvements in response times and availability. For practitioners looking to ground theory in practice, there’s also a concrete framework you can apply to governance, instrumentation, and change management.

Section 1: Core levers for latency reduction in multi-cloud deployments

1. Anycast routing to bring users closer to the service edge

Anycast routing announces the same IP prefix from multiple data-center locations, and Internet routing (BGP) delivers each user’s packets to the location that is closest in routing terms. That location is usually, though not always, the lowest-latency one, because BGP path selection does not measure latency directly. This approach reduces propagation delay and can speed up failover: when a site withdraws its announcement, traffic shifts to the next-nearest location that is still announcing the prefix. In practice, Anycast is a cornerstone of modern CDNs and cloud routing strategies, enabling low-latency delivery even as origin services move or scale across regions.

Key takeaway: Treat Anycast as a routing primitive that you combine with regional edge deployments to keep latency low for end users, while maintaining control over health-based redirection. Cloudflare’s explainer "What is Anycast DNS?" describes how an identical IP address can be announced from multiple locations to shorten the distance to users. (cloudflare.com)
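
To make the routing behavior concrete, here is a toy Python model, not a real BGP implementation. It assumes each site's announcement reaches a given user network with a known AS-path length and that the shortest path wins; real routers apply more tie-breakers, and the shortest AS path is not always the lowest-latency one. The names `Announcement` and `anycast_site` and the site labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Announcement:
    site: str         # data-center location announcing the shared anycast prefix
    as_path_len: int  # AS-path length of this announcement as seen from one user network


def anycast_site(announcements, withdrawn=frozenset()):
    """Return the site that one user network's packets reach in this toy model.

    Every site announces the same prefix. The user network prefers the
    announcement with the shortest AS path (ties broken by site name).
    Withdrawn announcements disappear, which is how traffic moves away
    from a failed location.
    """
    live = [a for a in announcements if a.site not in withdrawn]
    if not live:
        return None  # nothing announced: the prefix is unreachable
    return min(live, key=lambda a: (a.as_path_len, a.site)).site


# Hypothetical view of three sites from a single ISP.
view = [Announcement("fra", 2), Announcement("iad", 3), Announcement("sin", 4)]

print(anycast_site(view))                     # fra
print(anycast_site(view, withdrawn={"fra"}))  # iad
```

Withdrawing the `fra` announcement is the model's equivalent of taking a site out of service: the same IP address keeps working, but this user's traffic lands at the next-nearest site.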

2. BGP optimization and deliberate route control across clouds

Border Gateway Protocol (BGP) remains the lingua franca of inter-domain routing. In multi-cloud contexts, you can optimize BGP by tuning path selection (for example, LOCAL_PREF on routes you receive, and AS-path prepending or MED on routes you advertise), peering choices, and routing policies to reduce the number of hops between users and services and to speed up failover when regions or clouds become unavailable. Practical BGP optimization involves careful control of route advertisements, timely withdrawal of failing paths, and compatibility with cloud-native services that can augment routing decisions with health and performance signals.

Best-practice guidance from cloud networking professionals emphasizes the need for robust route management and graceful failover to minimize disruption during network events. For teams implementing BGP-aware strategies, concrete guidelines exist around router health monitoring, graceful restart, and integration with cloud load balancers and regional services. Google Cloud’s "Best practices for Cloud Router" guide highlights how BGP sessions and graceful restart help maintain stability during topology changes. (cloud.google.com)
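
The path-selection tuning described above can be illustrated with a simplified Python model of BGP best-path selection. It is a sketch under stated assumptions: only four of the real decision steps are modeled, MED is compared across all neighbors for simplicity, and the route labels, router IDs, and documentation-range AS numbers are hypothetical.

```python
import ipaddress
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Route:
    via: str                    # label for the transit provider or cloud interconnect
    as_path: tuple = ()         # AS numbers the advertisement traversed
    local_pref: int = 100       # set by the receiving network's policy; higher wins
    med: int = 0                # hint from the neighbor; lower wins
    router_id: str = "0.0.0.0"  # final deterministic tie-breaker; lower wins


def best_path(routes):
    """Select a route with a reduced subset of the BGP decision process.

    Order: highest LOCAL_PREF, shortest AS_PATH, lowest MED, lowest router ID.
    Real implementations apply more steps (origin, eBGP vs iBGP, IGP cost, ...)
    and by default compare MED only between routes from the same neighbor AS.
    """
    return min(
        routes,
        key=lambda r: (-r.local_pref, len(r.as_path), r.med,
                       ipaddress.IPv4Address(r.router_id)),
    )


def prepend(route, asn, times):
    """AS-path prepending: the origin repeats its own ASN to make a path look longer."""
    return replace(route, as_path=route.as_path + (asn,) * times)


# Hypothetical: a remote network sees our prefix (origin AS 64500) via two transits.
via_a = Route("transit-a", as_path=(64501, 64500), router_id="192.0.2.1")
via_b = Route("transit-b", as_path=(64502, 64500), router_id="192.0.2.2")

print(best_path([via_a, via_b]).via)                     # transit-a (router-ID tie-break)
print(best_path([prepend(via_a, 64500, 2), via_b]).via)  # transit-b
```

Prepending is only a hint to other networks: if the remote network's own policy raises LOCAL_PREF on the prepended `transit-a` route, that route still wins, because LOCAL_PREF is evaluated before AS-path length.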

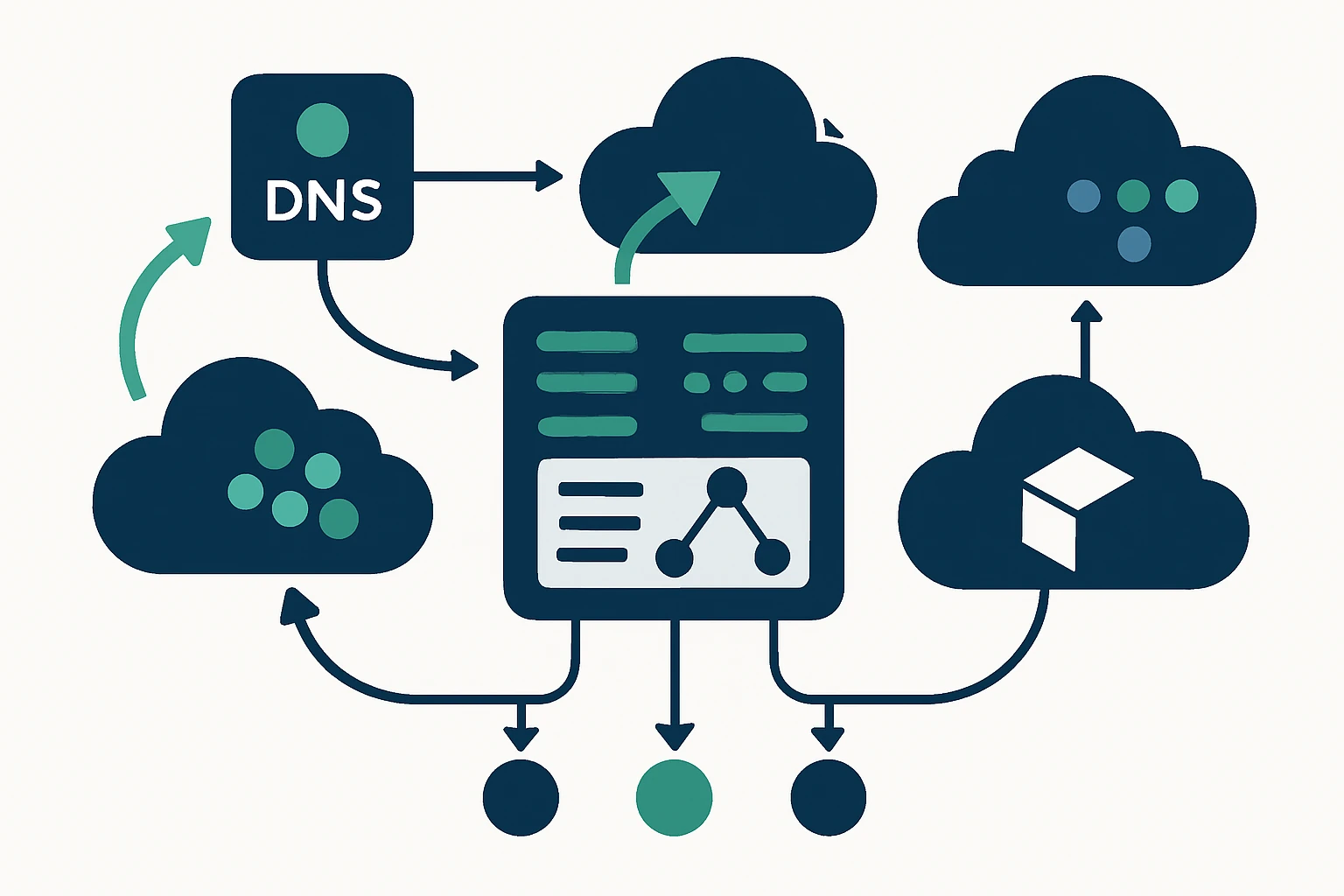

3. DNS-based traffic steering and failover for resilience

DNS-based traffic management enables policy-driven routing decisions at the edge of the Internet. When paired with health checks and multi-region deployments, DNS failover can shift traffic away from unhealthy regions or clouds, preserving overall service availability and latency performance. Route 53’s failover capabilities, in particular, illustrate how DNS can support active-active or active-passive architectures across regions with health-driven decisions, complementing load balancers and edge caches.

Organizations commonly combine DNS failover with latency- or geolocation-based routing policies to achieve both resilience and performance. For practitioners, understanding the interplay between DNS failover, health checks, and cloud-native load balancers is essential for a cohesive global strategy. See AWS guidance on DNS failover configuration in Route 53 for an authoritative reference on how to implement these patterns. (docs.aws.amazon.com)
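
For the active-passive pattern, a minimal Python sketch can build the request body that boto3's Route 53 `change_resource_record_sets` call accepts for failover records. The domain, IP addresses (documentation ranges), health-check ID, and hosted-zone ID are placeholders; the sketch builds the payload only and makes no API call, so it runs without AWS credentials.

```python
def failover_change_batch(name, primary_ip, secondary_ip, primary_health_check_id, ttl=60):
    """Build a Route 53 ChangeBatch for an active-passive pair of A records.

    Route 53 answers with the PRIMARY record while its health check passes
    and with the SECONDARY record otherwise.
    """
    def record(set_id, role, ip, health_check_id=None):
        rrset = {
            "Name": name,
            "Type": "A",
            "SetIdentifier": set_id,
            "Failover": role,  # "PRIMARY" or "SECONDARY"
            "TTL": ttl,        # short TTL so resolvers pick up a failover quickly
            "ResourceRecords": [{"Value": ip}],
        }
        if health_check_id is not None:
            rrset["HealthCheckId"] = health_check_id
        return {"Action": "UPSERT", "ResourceRecordSet": rrset}

    return {
        "Comment": "Active-passive failover",
        "Changes": [
            record("primary", "PRIMARY", primary_ip, primary_health_check_id),
            record("secondary", "SECONDARY", secondary_ip),
        ],
    }


batch = failover_change_batch(
    "api.example.com.", "192.0.2.10", "198.51.100.20",
    primary_health_check_id="PLACEHOLDER-HEALTH-CHECK-ID",
)
# With boto3 installed and AWS credentials configured, this could be applied with:
# boto3.client("route53").change_resource_record_sets(
#     HostedZoneId="PLACEHOLDER-ZONE-ID", ChangeBatch=batch)
```

Keeping the payload builder separate from the API call makes it easy to review or unit-test a routing change before it reaches production DNS.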

Section 2: Observability as the backbone of traffic engineering

Latency is a moving target. The only way to consistently beat it is to measure it across the entire path - from the user’s device, through recursive resolvers, to the edge, and through the service’s regional backends. Observability must be continuous, not episodic, and it should influence decisions in near real time. Modern traffic engineering relies on a feedback loop: observe, decide, and act, with changes validated against performance signals and reliability metrics.

One practical approach is to adopt a global view of traffic flow that aggregates DNS responses, edge latency, and cloud network telemetry. This kind of visibility supports proactive routing decisions and reduces the risk of stale policies driving users into suboptimal paths. For reference, Cloudflare’s "Traffic flow" documentation outlines how Anycast and edge routing work together to minimize latency and improve reliability, and it provides a canonical model of edge-aware routing. (developers.cloudflare.com)
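
As a minimal sketch of the aggregation step, assuming latency samples arrive as `(region, latency_ms)` pairs from real-user monitoring or synthetic probes, the Python below reports the median and 95th percentile per region using the nearest-rank method. The function names and sample data are hypothetical.

```python
import math
from collections import defaultdict


def nearest_rank_percentile(values, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples <= it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(samples):
    """Group (region, latency_ms) samples and report median and tail latency per region."""
    by_region = defaultdict(list)
    for region, latency_ms in samples:
        by_region[region].append(latency_ms)
    return {
        region: {
            "n": len(values),
            "p50": nearest_rank_percentile(values, 50),
            "p95": nearest_rank_percentile(values, 95),
        }
        for region, values in by_region.items()
    }


# Illustrative samples; region names and values are made up.
samples = [
    ("eu-west", 20), ("eu-west", 22), ("eu-west", 25), ("eu-west", 30), ("eu-west", 120),
    ("us-east", 40), ("us-east", 42), ("us-east", 45),
]
print(summarize(samples))
```

Tracking a tail percentile alongside the median matters for routing decisions: in this data, eu-west has the better median (25 ms vs 42 ms) but a far worse p95 (120 ms vs 45 ms).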

Section 3: A practical framework for latency-focused traffic engineering

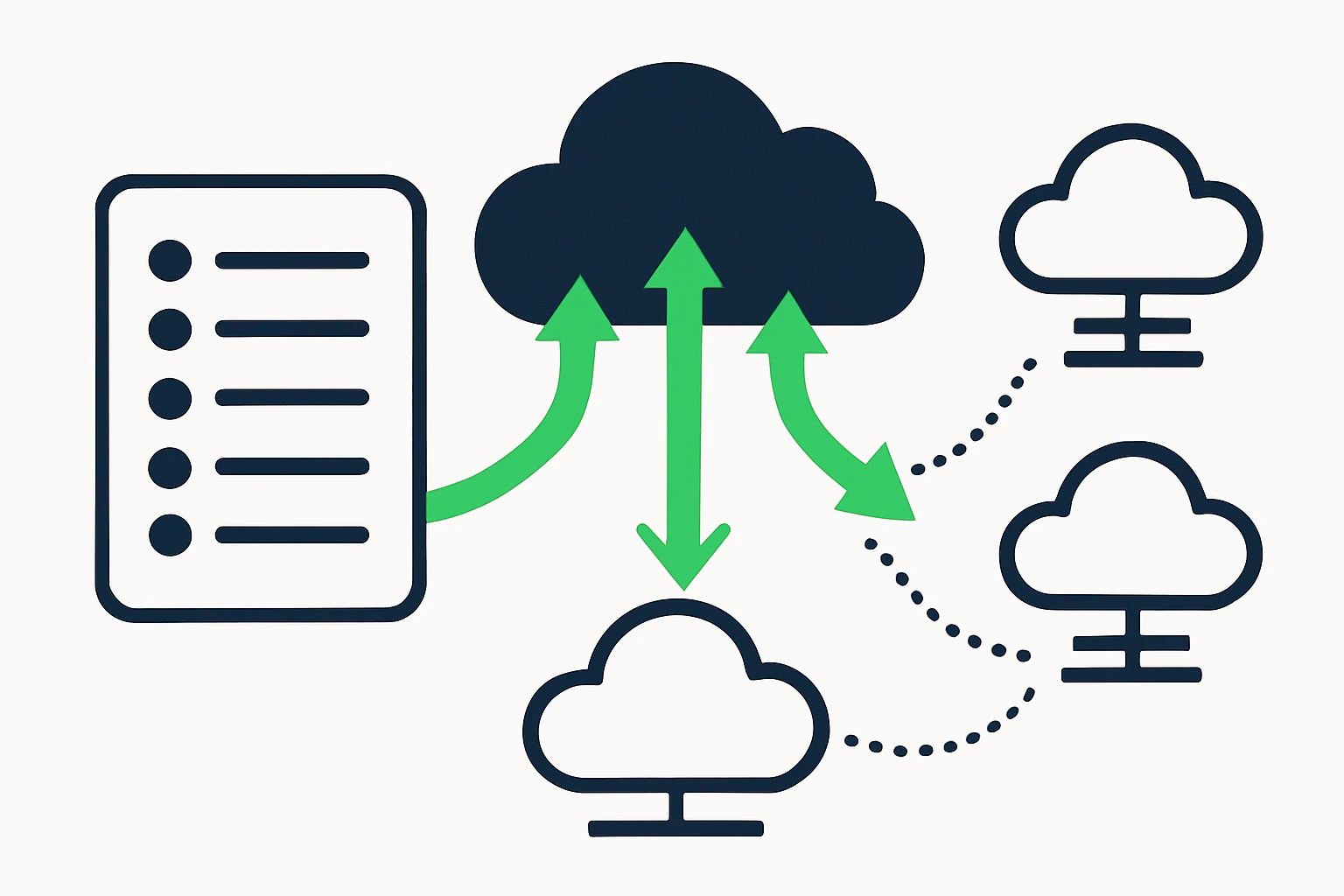

Below is a compact framework you can adopt to structure your multi-cloud routing program. It emphasizes measurement-driven decisions, modular policy design, and disciplined validation. The LOF framework - Latency-Optimization Framework - comprises four stages: Measure, Decide, Act, Validate.

| Stage | What to do | Key signals |
|---|---|---|
| Measure | Collect end-to-end latency, availability, and path health across clouds and edges | Active latency metrics, DNS resolution times and TTL behavior, health checks, packet loss rates |
| Decide | Define routing policies that minimize latency while respecting cost and risk | Latency targets, regional SLAs, cost envelopes |
| Act | Apply BGP route changes, update Anycast advertisements, and adjust DNS routing policies | BGP announcements, health-check-driven DNS records, edge routing rules |
| Validate | Continuously verify performance, roll back if needed, and document outcomes | Real-user monitoring, synthetic tests, change logs |
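
One pass through the four LOF stages can be sketched in Python as follows. This is an illustrative skeleton, not a reference implementation: it assumes each region has already been measured (p95 latency, health, cost), that "Decide" means picking the lowest-p95 compliant region, and that "Validate" means rejecting a change whose observed p95 regresses beyond a tolerance. `RegionStats`, `decide`, `validate`, `run_cycle`, and the `act_and_observe` hook are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionStats:
    region: str
    p95_ms: float       # Measure: tail latency for the user geography being routed
    healthy: bool       # Measure: current health-check verdict
    cost_per_gb: float  # input to the cost envelope


def decide(stats, latency_target_ms, cost_envelope):
    """Decide: lowest-p95 healthy region that meets the latency target and cost envelope."""
    candidates = [
        s for s in stats
        if s.healthy and s.p95_ms <= latency_target_ms and s.cost_per_gb <= cost_envelope
    ]
    if not candidates:
        return None  # nothing compliant: keep the current policy and escalate
    return min(candidates, key=lambda s: s.p95_ms).region


def validate(before_p95, after_p95, tolerance_ms=5.0):
    """Validate: keep a change only if p95 did not regress beyond the tolerance."""
    return after_p95 <= before_p95 + tolerance_ms


def run_cycle(current, stats, latency_target_ms, cost_envelope, act_and_observe):
    """One Measure -> Decide -> Act -> Validate pass; returns the region left in effect.

    `current` must appear in `stats`. `act_and_observe` applies the routing
    change and returns the p95 measured afterwards (hypothetical hook).
    """
    before = next(s.p95_ms for s in stats if s.region == current)
    chosen = decide(stats, latency_target_ms, cost_envelope)
    if chosen is None or chosen == current:
        return current
    after = act_and_observe(chosen)
    # Returning `current` stands in for rolling the change back.
    return chosen if validate(before, after) else current


stats = [
    RegionStats("us-east", 80.0, True, 0.09),
    RegionStats("eu-west", 45.0, True, 0.12),
    RegionStats("ap-south", 30.0, False, 0.08),
]
print(decide(stats, latency_target_ms=100, cost_envelope=0.10))                # us-east
print(run_cycle("us-east", stats, 100, 0.15, act_and_observe=lambda r: 50.0))  # eu-west
print(run_cycle("us-east", stats, 100, 0.15, act_and_observe=lambda r: 95.0))  # us-east
```

The last call shows the Validate stage doing its job: the change to eu-west looked better on paper, but the observed p95 after the switch regressed, so the cycle keeps us-east.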

In practice, you might layer these stages by cloud region and user geography, weaving together Anycast, BGP, and DNS with a centralized policy engine. A concrete example is to pair latency-based routing in Route 53 with regional health checks while maintaining an Anycast-enabled edge network for the fastest possible reach. This combination provides an actionable playbook without single-vendor lock-in. AWS’s Route 53 documentation on DNS failover is a good starting point for coupling health-driven routing with DNS-level decisions. (docs.aws.amazon.com)
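
How latency-based DNS answers interact with health checks can be reasoned about with a small Python model. It is a simplification that assumes a single latency figure per region for the querying resolver's network; the fail-open branch mirrors AWS's documented behavior of treating every record in a group as healthy when none of them are. `latency_answer` and the latency figures are hypothetical.

```python
def latency_answer(latency_ms_by_region, healthy_regions):
    """Toy model of latency-based DNS routing combined with health checks.

    latency_ms_by_region: latency from the querying resolver's network to each region.
    healthy_regions: regions whose health checks currently pass.
    """
    pool = {r: ms for r, ms in latency_ms_by_region.items() if r in healthy_regions}
    if not pool:
        # Fail open: with no healthy record, answer as if every record were healthy.
        pool = dict(latency_ms_by_region)
    return min(pool, key=pool.get)


latency = {"us-east-1": 70, "eu-west-1": 18, "ap-southeast-1": 160}  # hypothetical
all_up = set(latency)
print(latency_answer(latency, all_up))                  # eu-west-1
print(latency_answer(latency, all_up - {"eu-west-1"}))  # us-east-1
print(latency_answer(latency, set()))                   # eu-west-1 (fail-open)
```

The model makes the division of labor visible: latency data chooses among regions, while health checks only remove candidates, so a failing region drops out and the next-lowest-latency region takes over.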

Section 4: Real-world integration points and vendor-neutral considerations

To design routing strategies that survive real-world complexity, it helps to anchor decisions in signals that do not depend on a single provider. One such source is the domain-data landscape: knowing which domains and TLDs are active in a region can inform edge placement, DNS failover strategies, and even edge cache priming. WebAtla’s data services, including its RDAP & WHOIS databases, offer a structured view of domain registrations and ownership information that can complement routing decisions when expanding into new markets. For example, you can explore WebAtla’s RDAP & WHOIS Database to understand domain landscapes in target geographies, and browse domain catalogs in its List of domains by TLD. This information is not a substitute for network telemetry, but it can help calibrate geo-targeting and DNS-based policies in a data-informed way.

In your multi-cloud strategy, consider pairing these signals with edge-first routing decisions. The goal is to push traffic toward the edge location with the best combined latency, health, and price profile, then rely on DNS failover to handle regional disruptions. Cloudflare’s "What is Anycast DNS?" explainer and its related edge-routing guidance are a solid reference point for this edge-aware approach. (cloudflare.com)
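
A "best combined profile" needs some way to put milliseconds, error rates, and prices on one scale. A weighted, normalized score is one common way to do that. The Python below is a hedged sketch: the weights, the reference values used for normalization, the location codes, and the measurements are all assumptions to tune, and locations that fail health checks outright should be filtered out before scoring rather than merely penalized.

```python
def edge_score(latency_ms, error_rate, price_per_gb,
               weights=(0.6, 0.3, 0.1), reference=(100.0, 0.01, 0.10)):
    """Composite cost for one edge location; lower is better.

    Each metric is divided by a reference value so that milliseconds, error
    ratios, and prices land on a comparable scale before weighting.
    """
    w_lat, w_err, w_price = weights
    r_lat, r_err, r_price = reference
    return (w_lat * latency_ms / r_lat
            + w_err * error_rate / r_err
            + w_price * price_per_gb / r_price)


def pick_edge(candidates, **kwargs):
    """candidates maps location -> (latency_ms, error_rate, price_per_gb)."""
    return min(candidates, key=lambda loc: edge_score(*candidates[loc], **kwargs))


edges = {  # hypothetical measurements for one user geography
    "ams": (25, 0.002, 0.08),
    "lhr": (20, 0.020, 0.07),
    "fra": (30, 0.001, 0.05),
}
print(pick_edge(edges))                           # fra
print(pick_edge(edges, weights=(1.0, 0.0, 0.0)))  # lhr (latency only)
```

With the default weights, `fra` wins despite the highest raw latency because its error rate and price are lowest; scoring on latency alone picks `lhr`, whose elevated error rate would otherwise be ignored.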

Section 5: Limitations, trade-offs, and common mistakes

No architecture is free of compromises. Latency-focused multi-cloud routing involves balancing performance with stability, complexity, and cost. Here are the most common trade-offs and missteps to avoid:

- Over-optimizing BGP at the expense of stability: Frequent path changes can destabilize traffic if not carefully staged and tested. Build change-control around routing policy updates and include rollback plans.

- Over-reliance on DNS failover without fast health signals: DNS-based failover is powerful, but it must be paired with timely health checks and deliberate TTL management to avoid slow failovers or flapping. See AWS guidance on DNS failover and health checks for best practices. (docs.aws.amazon.com)

- Misaligned TTLs and caching effects: TTLs that are too long slow down failover because resolvers keep serving cached answers; TTLs that are too short increase DNS query load and cost. Balance them against observed failover latency and user-experience goals.

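- Edge misconfigurations in anycast deployments: Anycast can reduce latency, but a site that keeps announcing the prefix while its services are unhealthy will keep attracting nearby traffic. Monitor routing policies and tie announcements to health so traffic isn’t diverted into unhealthy data centers.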
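
The TTL and health-check trade-off can be estimated with back-of-the-envelope arithmetic. The Python below is a rough model, not a guarantee: it assumes detection takes about one check interval per required consecutive failure, and that a resolver which cached the old answer just before the switch serves it for one more full TTL. The example values reflect Route 53's 30 s standard and 10 s fast check intervals with a failure threshold of 3; substitute your provider's settings. Function names are hypothetical.

```python
def worst_case_failover_s(check_interval_s, failure_threshold, ttl_s):
    """Rough upper bound on how long some clients keep reaching a failed endpoint.

    Detection takes about interval x threshold seconds of consecutive failed
    checks; a resolver that cached the old answer just before the switch can
    then serve it for one more full TTL. Checker propagation delay and
    resolvers that ignore TTLs are not modeled.
    """
    return check_interval_s * failure_threshold + ttl_s


def refreshes_per_hour(ttl_s):
    """Approximate upstream lookups per hour that one busy resolver makes for a name."""
    return 3600 / ttl_s


for interval, threshold, ttl in [(30, 3, 300), (10, 3, 60)]:
    print(f"interval={interval}s threshold={threshold} ttl={ttl}s -> "
          f"~{worst_case_failover_s(interval, threshold, ttl)}s to fail over, "
          f"{refreshes_per_hour(ttl):.0f} refreshes/hour per resolver")
```

Moving from 30 s checks and a 300 s TTL to 10 s checks and a 60 s TTL cuts the estimate from about 390 s to about 90 s, at the cost of roughly five times as many resolver refreshes for the name.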

Limitations aren’t only technical. Budget, personnel, and interoperability constraints often shape how aggressively you can pursue advanced routing strategies. The LOF framework helps gate decisions into manageable steps so teams can learn what works in their own environment.

Section 6: Expert insight and practical takeaways

Expert guidance from the edge-routing community emphasizes that the strongest latency reductions come from a layered approach: combine edge delivery with intelligent routing policies, verified by continuous measurement. Cloudflare’s materials present Anycast routing as a foundational technique for reducing distance to users and improving resilience when deployed thoughtfully across a service’s footprint. This perspective reinforces the case for a disciplined, measurement-driven strategy; Cloudflare’s "What is Anycast DNS?" and "Traffic flow" pages illustrate how edge proximity and resilient routing come together in practice. (cloudflare.com)

Section 7: Putting it all together - a practical, vendor-neutral plan

1) Map your cloud topology and user geography. Identify critical workloads and latency-sensitive paths across AWS, GCP, and Azure.

2) Instrument cross-cloud latency and health. Use synthetic checks and real-user telemetry to inform routing decisions, not just to track availability.

3) Design a layered routing policy. Use Anycast at the edge to minimize user distance, BGP controls for inter-domain routing, and DNS-based failover for region-level resilience.

4) Validate and iterate. Run controlled experiments, compare before/after latency and error rates, and document outcomes to improve the policy over time.

5) Integrate signals from domain data where useful. Domain signals - such as domain catalogs by TLD or RDAP/WHOIS data - can support geo-targeting decisions within a broader governance framework, complementing network telemetry. For readers exploring the domain-data angle, the client resources page offers practical entry points: RDAP & WHOIS Database and List of domains by TLD.

Conclusion: the road to latency-aware, resilient multi-cloud networking

Optimizing cloud routing and traffic engineering is about building a resilient, low-latency fabric that respects the realities of multi-cloud footprints and diverse user locations. It requires a disciplined blend of Anycast edge delivery, deliberate BGP optimization, and DNS-based resilience, all measured by an integrated observability layer. When you combine these elements with vendor-neutral signals and a clear governance framework, you create a network design that scales with your growth while staying within budget and risk thresholds. The framework and practices outlined here provide a concrete path forward for teams seeking to elevate cloud network performance without over-reliance on any single vendor.