Introduction: The Rising Imperative of Smart Cloud Routing

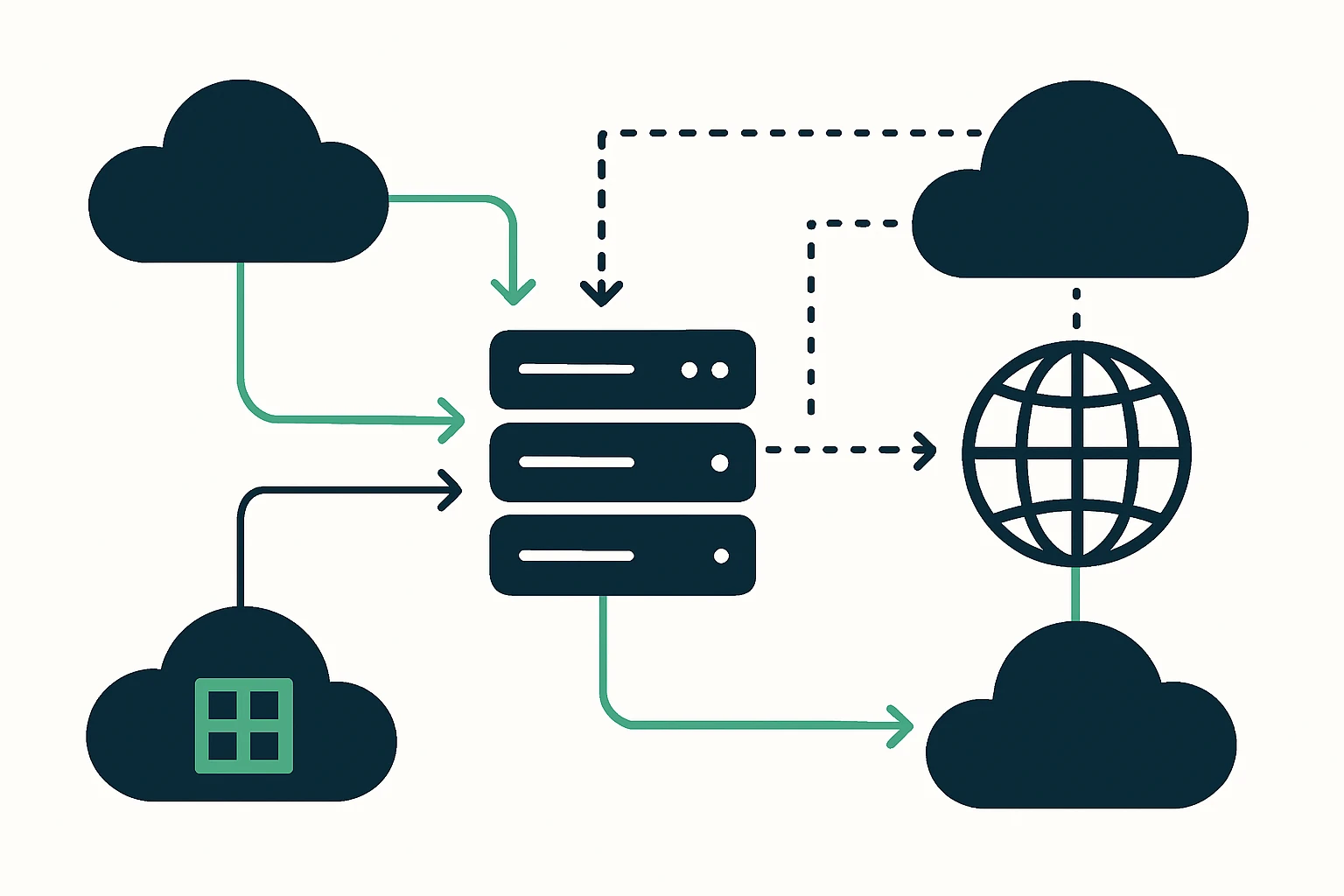

Today’s SaaS and enterprise apps span multiple cloud providers and edge locations. Users expect fast, consistent performance regardless of their geography or network path, and an outage in one region should not undermine global reliability. This reality puts traffic engineering and intelligent routing at the center of cloud strategy. Modern routing isn’t just about finding the fastest path; it’s about shaping how traffic moves across clouds, data centers, and edges in real time. A well-designed approach combines edge routing, anycast, and resilient DNS- and BGP-based mechanisms to reduce latency and improve uptime across a multi-cloud footprint. Anycast routing, in particular, has emerged as a foundational technique for directing clients to the nearest operational edge, which can substantially cut response times for users who are far from a single provider’s core region.

As you read, consider how your own organization balances speed, reliability, and operational complexity when routing traffic between AWS, GCP, and Azure while supporting global users. Cloud routing optimization is not a one-size-fits-all recipe; it’s a framework for aligning routing choices with business SLAs and availability requirements. For context on global edge architectures and modern traffic steering, see industry approaches that leverage anycast and DNS-based strategies to reduce latency and improve resilience.

Source-based routing choices increasingly interact with DNS and BGP policies at scale. In practice, organizations blend edge networking with DNS failover and cloud-provider routing features to ensure traffic lands where it should, even during regional disruptions. In this article, we synthesize a practical approach for multi-cloud networking that blends anycast routing, BGP optimization, and DNS failover strategies to improve cloud network performance for SaaS and DevOps teams.

Why Cloud Routing Needs Traffic Engineering

Cloud networks are no longer monolithic; they are ecosystems of interlinked regions, peering points, and APIs. Traffic engineering helps you trade off latency, availability, and cost while maintaining control over routing behavior across provider boundaries. The benefits are tangible: lower average latency for end users, faster failover when a region experiences issues, and more predictable performance under load spikes. This is especially important for services that operate across AWS, Google Cloud, and Microsoft Azure, where default routing can silently bias traffic toward a subset of regions unless deliberately configured.

Two overarching ideas drive effective cloud routing today: edge proximity and policy-driven routing. Edge proximity is about steering traffic toward the nearest healthy edge location, often achieved with anycast-based approaches. Policy-driven routing is about applying rules that reflect business priorities - such as regional compliance, cost tolerance, or service-level commitments - across the network. Taken together, these ideas enable consistent user experiences even as cloud topologies evolve.

From a practical standpoint, a resilient multi-cloud routing strategy must address three realities: dynamic health of edge locations, cross-cloud path variability, and DNS propagation delays. Each reality introduces trade-offs and choices, which we will explore through concrete techniques and a pragmatic framework.

Core Techniques for Reducing Latency Across Clouds

Anycast routing for low-latency ingress

Anycast routing enables a single IP address to be announced from multiple, geographically dispersed edge locations. The network delivers each request to the location that is closest in routing terms (usually, though not always, the geographically nearest one) and still advertising the address, which can substantially reduce end-to-end latency and improve resilience against localized outages. This approach is widely used in modern CDN and edge networks to bring content closer to users, particularly for globally distributed SaaS and web apps. Cloudflare’s explanation of Anycast DNS describes how this mechanism works and why it matters for latency-sensitive workloads. (cloudflare.com)

In a multi-cloud setting, anycast is often complemented by provider-specific routing and health checks. When one edge location becomes unhealthy and stops advertising the shared address, traffic shifts to the next-best location, helping to preserve performance without manual intervention. The result is a more robust ingress path for global users, even as cloud footprints shift.
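Anycast selection happens inside the network through route advertisements, not in application code, so the following is only a toy model of the outcome described above. It assumes a hypothetical `EdgeSite` type, made-up site names, and made-up path costs standing in for routing preference; none of these come from a real provider API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class EdgeSite:
    name: str
    announcing: bool           # False models a withdrawn route (unhealthy site)
    path_cost: Dict[str, int]  # hypothetical cost from each client region


def select_edge(client_region: str, sites: List[EdgeSite]) -> Optional[EdgeSite]:
    """Return the announcing site with the lowest path cost for this client."""
    candidates = [
        s for s in sites if s.announcing and client_region in s.path_cost
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s.path_cost[client_region])


sites = [
    EdgeSite("frankfurt", True, {"eu": 1, "us": 6}),
    EdgeSite("virginia", True, {"eu": 6, "us": 1}),
]
nearest = select_edge("eu", sites)

# Simulate an outage: Frankfurt withdraws its route, so "eu" clients
# fall through to the lowest-cost site that is still announcing.
sites[0].announcing = False
fallback = select_edge("eu", sites)
```

In this model the "eu" client lands on the Frankfurt site first and on the Virginia site after the withdrawal. A real network converges over some interval rather than instantly, which the sketch does not capture.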

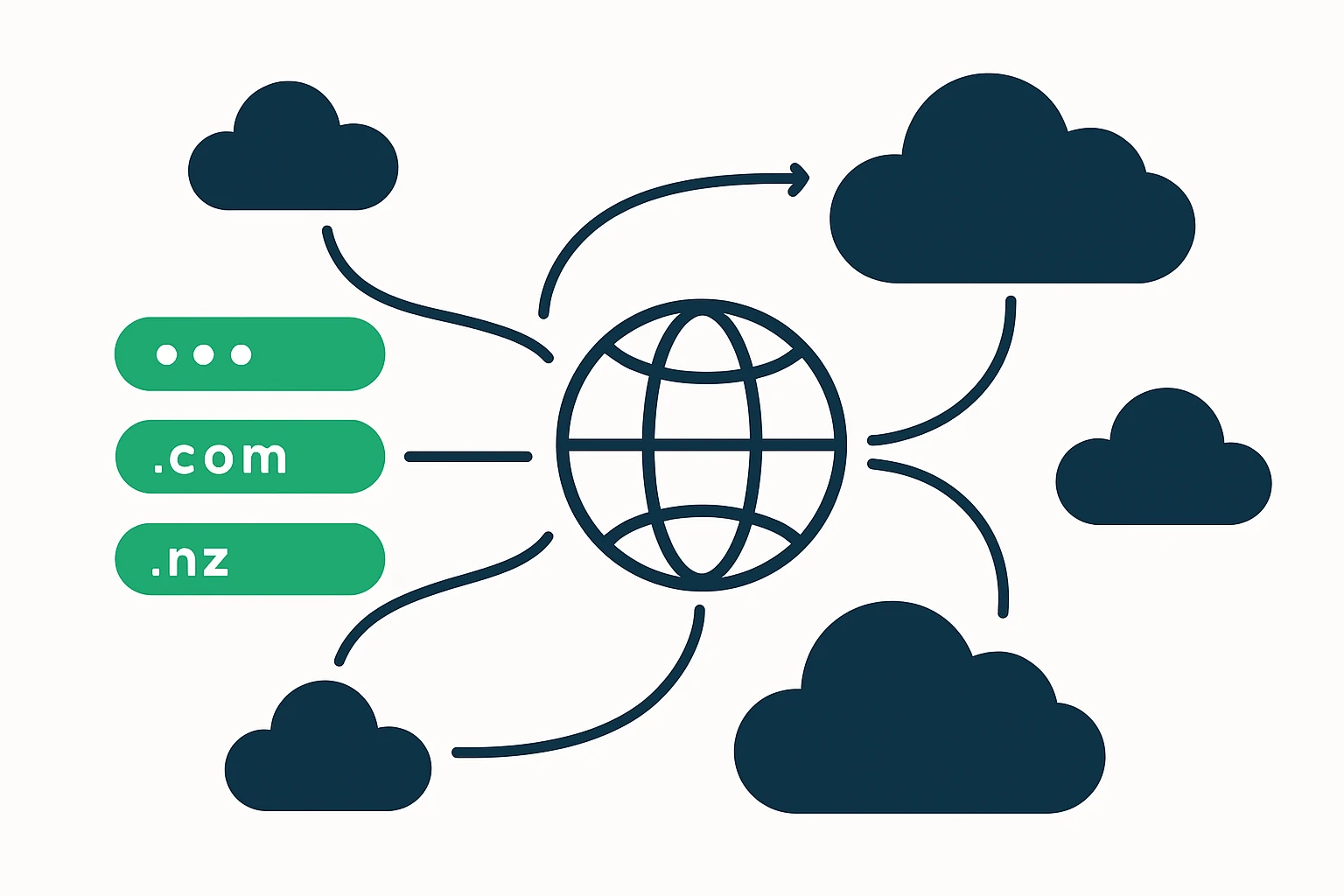

DNS failover strategies to complement edge routing

DNS failover is a classic, lightweight mechanism for redirecting traffic away from failing endpoints by changing DNS answers in response to health checks. While not a substitute for rapid, in-network rerouting, DNS failover can act as a valuable last line of defense when an outage affects an entire cloud region. Official documentation from AWS demonstrates how DNS failover can be configured to route traffic away from a failed region or endpoint, enabling continued service delivery through alternate regions.

When implemented thoughtfully, DNS failover complements edge-based routing by ensuring clients resolve to endpoints with healthy reachability, even across multiple clouds. This layered approach - edge routing plus DNS failover - can substantially improve availability for globally distributed apps.
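As a rough illustration of health-check-driven failover, the sketch below models a primary and a secondary endpoint and answers with the primary only while it is considered healthy. The `Endpoint` type, the threshold of three consecutive failures, and the documentation-range IP addresses are all assumptions made for the example; this is not any DNS provider's API.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    address: str
    failure_threshold: int = 3     # consecutive failed probes before "unhealthy"
    consecutive_failures: int = 0

    def record_probe(self, ok: bool) -> None:
        """Update the failure streak from one health-check result."""
        self.consecutive_failures = 0 if ok else self.consecutive_failures + 1

    @property
    def healthy(self) -> bool:
        return self.consecutive_failures < self.failure_threshold


def resolve(primary: Endpoint, secondary: Endpoint) -> str:
    """Answer with the primary while healthy, otherwise a healthy secondary."""
    if primary.healthy:
        return primary.address
    # Assumption for this sketch: if both are unhealthy, keep answering
    # with the primary rather than returning nothing.
    return secondary.address if secondary.healthy else primary.address


primary = Endpoint("192.0.2.10")      # e.g. an endpoint in cloud A
secondary = Endpoint("198.51.100.10") # e.g. an endpoint in cloud B

answers = [resolve(primary, secondary)]
for probe_ok in (False, False, False, True):
    primary.record_probe(probe_ok)
    answers.append(resolve(primary, secondary))
```

Requiring several consecutive failures before switching is one simple way to avoid flapping on a single lost probe, at the cost of slower detection.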

BGP optimization for cross-cloud control

BGP remains the workhorse of inter-domain routing, including cross-cloud connectivity. Tuning BGP policies, such as graceful restart, prefix filtering, and which routes you advertise to each provider, helps influence path selection and failover behavior without relying solely on DNS. While the specifics of BGP configuration depend on your network operating model, the principle is straightforward: make routing decisions that align with your performance and reliability goals while preserving reachability across cloud regions.
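To make "influencing path selection" more concrete, here is a heavily simplified sketch of how a router might rank two routes to the same prefix. It considers only three attributes, in this order: higher local preference, then shorter AS path, then lower MED. The real BGP decision process has more steps and vendor-specific details, and the `Route` type, next-hop labels, and AS numbers (taken from the documentation range) are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Route:
    next_hop: str
    local_pref: int
    as_path: Tuple[int, ...]
    med: int = 0


def best_route(routes: List[Route]) -> Route:
    """Pick a route: highest local_pref, then shortest AS path, then lowest MED."""
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path), r.med))


# The same prefix learned over two cloud interconnects. The second path
# has been prepended, which makes it longer and therefore less attractive.
routes = [
    Route("cloud-a", 100, (64496, 64500)),
    Route("cloud-b", 100, (64497, 64497, 64497, 64500)),
]
default_choice = best_route(routes)

# Raising local preference on the cloud-b route overrides AS path length.
preferred = [routes[0], Route("cloud-b", 200, routes[1].as_path)]
policy_choice = best_route(preferred)
```

The point of the example is the ordering: a local policy knob such as local preference outranks path length, which is why it is a common lever for steering outbound traffic toward a preferred provider.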

Practical Framework: A 4-Step Approach to Multi-Cloud Routing

- Map traffic patterns and SLAs. Document user geography, peak load windows, and latency/availability targets per service. This baseline informs where to optimize and how to evaluate trade-offs between speed and resilience.

- Design a hybrid routing strategy. Combine anycast-based edge routing for low latency with policy-based routing across clouds to direct traffic to preferred regions during outages or scaling events.

- Implement layered resilience (DNS + in-network routing). Use DNS failover in conjunction with BGP- and health-check-driven routing decisions to create multiple independent failover paths and reduce single points of failure.

- Monitor, verify, and iterate. Establish real-time dashboards for latency, error rates, regional outages, and DNS propagation delays. Iterate on routing policies as your cloud portfolio and user base evolve.

This four-step framework foregrounds concrete decisions, rather than abstract ideals, and is applicable to teams operating across AWS, Google Cloud, and Azure. It also aligns well with the broader trend toward multi-cloud networking strategies that emphasize interoperability, performance, and reliability across providers.
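The first and last steps (setting latency targets, then monitoring against them) can be sketched as a small check that flags regions whose 95th-percentile latency exceeds a target. The region names, sample values, and the 100 ms target are invented for illustration, and the nearest-rank percentile used here is only one of several common definitions.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def regions_over_target(
    latency_ms: Dict[str, List[float]], target_ms: float, pct: int = 95
) -> List[str]:
    """Return the regions whose pct-th percentile latency exceeds the target."""
    return sorted(
        region
        for region, samples in latency_ms.items()
        if samples and percentile(samples, pct) > target_ms
    )


# Hypothetical per-region latency samples in milliseconds.
latency_ms = {
    "eu-west": [40, 42, 45, 50, 48],
    "ap-south": [120, 130, 300, 125, 128],
}
breaching = regions_over_target(latency_ms, target_ms=100)
```

With these made-up samples only "ap-south" is flagged. In practice the samples would come from whatever telemetry pipeline you already run, and the target from the SLA documented in the first step.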

Limitations, Trade-offs, and Common Mistakes

Every routing choice carries trade-offs. A candid assessment helps teams avoid costly misconfigurations and brittle architectures. Below are widely observed limitations and missteps to watch for as you scale cloud routing efforts.

- DNS TTLs and propagation delays. DNS-based failover is only as fast as health-check detection plus the time cached records take to expire, and resolvers or clients may hold stale answers for up to the record’s TTL (sometimes longer), so it should be complemented with in-network routing controls where possible.

- Health-check accuracy. Inaccurate or stale health checks can trigger premature failover or, conversely, delay recovery. Ensure health checks are aligned with real user experience and network conditions.

- Operational complexity. Combining anycast, BGP policies, and DNS failover across multiple clouds increases management overhead. Start with a minimal viable framework and expand incrementally.

- Observability gaps. Without unified telemetry across clouds, it’s easy to miss cross-cloud path anomalies. Invest in centralized visibility that correlates DNS, BGP, and latency metrics.
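The TTL and health-check trade-offs in the list above can be put into rough numbers. The estimate below assumes that an outage is detected after a fixed number of consecutive failed probes and that clients may then keep using a cached answer for one full TTL. It ignores resolvers that disregard TTLs, application-level caching, and connection reuse, so treat it as a back-of-the-envelope figure rather than a guarantee. The function name and the sample values are hypothetical.

```python
def worst_case_failover_seconds(
    check_interval_s: int, failure_threshold: int, ttl_s: int
) -> int:
    """Rough upper bound: time to detect the failure plus one full TTL."""
    detection_s = check_interval_s * failure_threshold
    return detection_s + ttl_s


# 30 s probes, 3 consecutive failures required, 60 s record TTL.
slow = worst_case_failover_seconds(30, 3, 60)

# Faster probes shorten detection, but the TTL term is unchanged.
fast = worst_case_failover_seconds(10, 3, 60)
```

Under these assumptions the first configuration gives roughly 150 seconds and the second roughly 90 seconds. Lowering the TTL shrinks the second term but increases query volume, which is one reason to pair DNS failover with in-network rerouting.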

Expert insight

“Anycast routing reduces latency by steering clients toward the nearest edge, an approach that is central to modern edge networks and essential for global SaaS performance.” This principle underpins how providers and enterprises think about edge routing and traffic steering today.

Source context: Anycast routing concepts are widely discussed in industry resources and practitioner guides that explain how a single IP can be served by multiple locations to shorten the path to end users. See Cloudflare, “What is Anycast DNS?” (cloudflare.com)

Putting It All Together: A Practical View for SaaS and DevOps Teams

For teams delivering software across AWS, GCP, and Azure, a disciplined approach to traffic engineering translates into tangible outcomes: lower latency for global users, faster recovery from regional issues, and a clearer path to meeting reliability commitments. Google Cloud’s multi-cloud networking perspective highlights how tiered networking and cross-cloud connectivity features can empower organizations to design more adaptable, performant networks at scale.

Organizations looking to explore or validate their routing options should consider how domain and DNS resiliency intersect with edge routing. For teams managing large domain portfolios across many TLDs, a domain inventory and provenance capability becomes part of the resilience equation. To explore domain portfolio data as part of a broader resilience strategy, you can review WebAtla’s resources on domain directories and domain data services: WebAtla .website domain directory and WebAtla RDAP & WHOIS database.

Additionally, the broader cloud community continues to validate these concepts through provider-native features. AWS Route 53, for example, documents DNS failover configuration as a mechanism to route traffic away from unhealthy endpoints, reinforcing the layered approach described above. See the AWS Route 53 DNS Failover documentation. (docs.aws.amazon.com)

Modern cloud networks also emphasize the strategic value of multi-cloud connectivity and intelligent routing as core competencies. Google Cloud’s AI-powered multi-cloud networking initiatives illustrate how teams think about routing, edge strategy, and cross-cloud interoperability at scale. See Google Cloud multi-cloud networking. (cloud.google.com)

Conclusion

Traffic engineering for multi-cloud environments is less about chasing the latest gadget and more about implementing an integrated, observable, and resilient routing posture. By combining anycast ingress, DNS failover, and thoughtful BGP policies, organizations can reduce latency, improve uptime, and deliver a more consistent user experience across AWS, GCP, and Azure. The practical framework outlined here provides a concrete path to design, deploy, and operate a routing strategy that scales with your cloud footprint and user base. As you move forward, keep your focus on measurement, adaptability, and the user’s real experience - the core drivers of successful cloud routing today.