Introduction: The latency and uptime challenge in multi-cloud environments

In today’s software landscape, SaaS providers routinely deploy across multiple public clouds to meet customer demand, ensure regional availability, and optimize costs. But multi-cloud architectures complicate traffic routing, load balancing, and consistent performance. Latency spikes, regional outages, and jitter can quickly translate into degraded user experience and unhappy customers. To address these realities, teams are increasingly adopting a practical, framework-driven approach to cloud routing optimization that blends edge proximity, global reach, and intelligent failover. The goal isn’t just faster packets; it’s resilient, predictable performance across provider boundaries. Industry observers note that combining edge-based routing with global control planes and DNS-level resilience can materially improve end-user experience in diverse cloud environments.

A core premise is that routing decisions should be data-driven, automated, and sensitive to real-time health signals from endpoints across AWS, GCP, and Azure. This is where the convergence of anycast routing, global traffic management, and DNS failover becomes a practical toolkit for builders and operators. As Cloudflare explains, Anycast DNS directs traffic to the nearest edge location, enhancing proximity and resilience. At the same time, large cloud providers offer global load-balancing options that steer traffic away from congested or unhealthy zones. Collectively, these techniques enable a cloud network to behave more like a single, intelligently managed fabric than a collection of isolated networks. (cloudflare.com)

Key concepts for routing optimization in multi-cloud environments

Anycast routing: edge proximity and resilience

Anycast routing is a foundational idea for reducing end-user latency and improving fault tolerance. With anycast, a single IP prefix is advertised from multiple locations, and user requests reach the nearest instance as determined by the routing protocols and network topology. "Nearest" here means nearest in routing terms, which usually, but not always, matches geographic distance. This model is widely used to improve response times and absorb regional failures without manual rerouting. Cloudflare’s learning resources illustrate how Anycast DNS can route users to geographically close edge servers while providing resilience against certain attack vectors and regional outages. Practically, anycast helps shrink the distance between clients and service endpoints, which translates into lower latency and faster failover when endpoints become unhealthy. (cloudflare.com)

For teams evaluating multi-cloud strategies, anycast is most effective when paired with real-time health signals and health-based routing decisions. It’s not a silver bullet: it works best when there is a broad edge presence, clear endpoint health checks, and consistent visibility across clouds. In other words, anycast buys you proximity, but you still need intelligent routing policies to steer traffic away from degraded zones.
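To make the proximity-plus-health idea concrete, here is a minimal toy model in Python. Real anycast selection is made by BGP inside the network, not by application code, so this is only an illustration. The `Site` type, the `path_cost` field (a stand-in for routing preference), and the site names are all assumptions introduced for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str        # hypothetical edge location label
    path_cost: int   # stand-in for routing preference; lower wins
    healthy: bool    # assumption: an unhealthy site withdraws its route


def anycast_pick(sites):
    """Return the announced (healthy) site with the lowest path cost, or None."""
    announced = [s for s in sites if s.healthy]
    if not announced:
        return None
    return min(announced, key=lambda s: s.path_cost)


sites = [
    Site("fra", 2, True),
    Site("iad", 5, True),
    Site("sin", 9, True),
]

# With every site announcing, the lowest-cost site receives the traffic.
nearest = anycast_pick(sites)

# If the preferred site withdraws its announcement, traffic shifts to the
# next-best site without any client-side change.
after_failure = anycast_pick([Site("fra", 2, False)] + sites[1:])
```

The point of the sketch is the second call: once the preferred site stops announcing, the same address simply resolves to the next-best location, which is why health-driven route withdrawal matters as much as edge presence.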

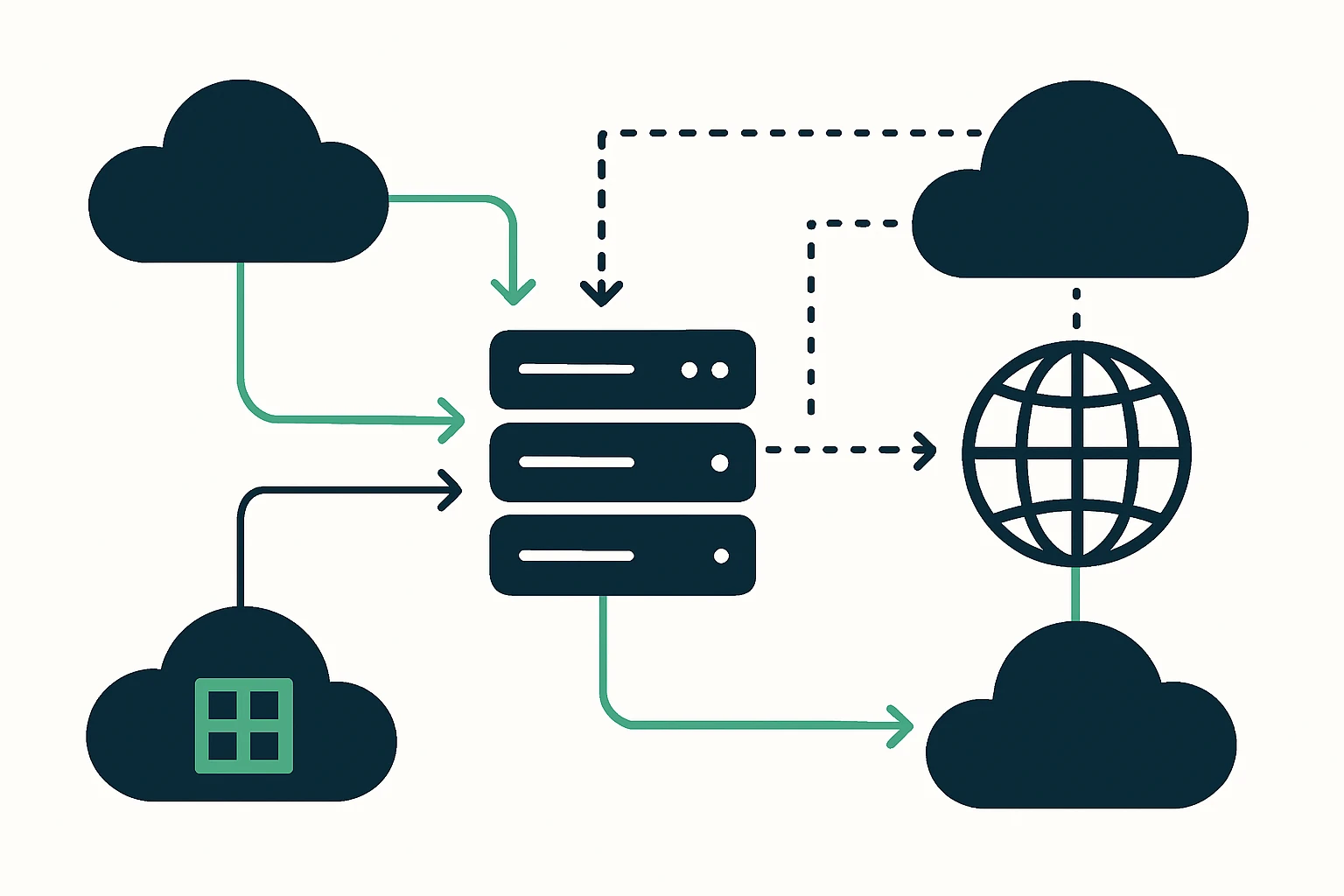

Global traffic management: orchestrating traffic across clouds

Beyond local proximity, global traffic management involves directing user requests to optimal endpoints across cloud regions and providers. Modern traffic control planes, such as Google Cloud’s Traffic Director, enable organizations to manage service-to-service traffic across clouds and on‑prem environments. Traffic Director exemplifies how a centralized policy and control plane can coordinate load balancing, service discovery, and routing decisions across scattered locations, which is critical when workloads span AWS, GCP, and Azure. This approach helps maintain performance and availability even as cloud topology shifts or regional outages occur.

From an architectural standpoint, global traffic management should be paired with continuous health checks, region-aware routing policies, and instrumentation that reveals cross-cloud latency and error patterns. This ensures that latency is not only minimized but also predictable, which is essential for user‑visible performance metrics and service level objectives. Google’s own materials emphasize the value of a service mesh and a global control plane to achieve these goals, reinforcing the practicality of cross-cloud traffic orchestration. (cloud.google.com)
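As a rough illustration of health-aware, region-aware steering, the Python sketch below scores candidate endpoints by observed latency plus an error penalty and picks the best one allowed by policy. It is not how Traffic Director or any specific product works; the `Endpoint` fields, the `error_penalty_ms` weighting, and the sample figures are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    cloud: str
    region: str
    p95_latency_ms: float
    error_rate: float   # fraction of failed requests, 0.0 to 1.0
    healthy: bool


def score(ep, error_penalty_ms=1000.0):
    """Lower is better: latency plus a penalty proportional to error rate."""
    return ep.p95_latency_ms + ep.error_rate * error_penalty_ms


def choose_endpoint(endpoints, allowed_regions=None):
    """Pick the best healthy endpoint, optionally restricted to a region set."""
    candidates = [
        e for e in endpoints
        if e.healthy and (allowed_regions is None or e.region in allowed_regions)
    ]
    if not candidates:
        return None
    return min(candidates, key=score)


endpoints = [
    Endpoint("aws", "us-east-1", 80.0, 0.05, True),      # fast but error-prone
    Endpoint("gcp", "us-central1", 95.0, 0.001, True),   # slightly slower, clean
    Endpoint("azure", "westeurope", 40.0, 0.0, False),   # fastest, but unhealthy
]

best = choose_endpoint(endpoints)
pinned = choose_endpoint(endpoints, allowed_regions={"us-east-1"})
```

Here the unhealthy endpoint is excluded despite its low latency, and the error penalty tips the unrestricted choice toward the cleaner endpoint. Passing `allowed_regions` shows how a compliance or residency policy can override the purely performance-based pick.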

DNS-based resilience and failover: steering without stateful bindings

DNS failover is a powerful technique for maintaining availability when endpoints experience partial outages. By monitoring health signals and adjusting DNS responses, operators can redirect traffic to healthy regions or endpoints, potentially across clouds. Because DNS sits in front of every provider, failover at this layer complements other routing strategies with a provider-independent way to reach healthy capacity during disruptions. How quickly clients actually move depends on record TTLs and resolver caching, so the switch is fast only when TTLs are kept short. This technique becomes especially valuable in multi-cloud environments where direct end-to-end connectivity between clouds can exhibit variability.

For teams that manage domain assets across many markets, DNS failover is often a practical first step toward higher resilience, even though it is not a complete solution for latency optimization. The ability to quickly switch endpoints - without rebuilding application logic - can dramatically improve uptime during regional outages or carrier-level issues. Note: DNS failover is most effective when health checks are accurate and endpoints are spread across diverse regions and providers.
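The selection logic behind DNS failover can be sketched in a few lines of Python. This is a simplified model, not a real DNS server or any provider’s API: the `(priority, ip)` record shape, the `is_healthy` callback, and the fail-open behavior are assumptions chosen for the illustration, and the addresses come from the reserved documentation ranges.

```python
def resolve_with_failover(records, is_healthy):
    """Return the IPs to serve: the healthy records in the best priority tier.

    records    -- list of (priority, ip) tuples; a lower priority is preferred
    is_healthy -- callable taking an ip and returning True or False
    """
    healthy = [(prio, ip) for prio, ip in records if is_healthy(ip)]
    if not healthy:
        # Assumption: fail open and serve every record rather than an
        # empty answer when all health checks fail at once.
        return [ip for _, ip in sorted(records)]
    best_tier = min(prio for prio, _ in healthy)
    return [ip for prio, ip in healthy if prio == best_tier]


records = [
    (1, "192.0.2.10"),     # primary, cloud A
    (1, "192.0.2.11"),     # primary, cloud A
    (2, "198.51.100.20"),  # secondary, cloud B
]

all_up = resolve_with_failover(records, lambda ip: True)

down = {"192.0.2.10", "192.0.2.11"}
primary_down = resolve_with_failover(records, lambda ip: ip not in down)

everything_down = resolve_with_failover(records, lambda ip: False)
```

Failing open is a deliberate design choice in this sketch: a broken health checker should not take the whole service out of DNS. Whether that is the right default depends on how trustworthy the health signal is.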

Framework for cloud routing optimization in a multi-cloud SaaS stack

Framework overview: This framework combines discovery, decisioning, deployment, and observability into a repeatable pattern for cloud routing optimization across AWS, GCP, and Azure. It’s designed to be practical for SaaS teams that must balance performance with cost and operational risk.

- Discovery - Inventory endpoints and services across clouds, map dependency graphs, and identify critical user journeys. Include edge POPs, regional endpoints, and on-prem bridges if applicable. Establish baseline latency, error rates, and saturation levels per path.

- Decisioning - Choose a routing posture that combines proximity with global reach. Decide where to apply anycast for edge proximity, where to implement global traffic management for cross-cloud distribution, and how to mix DNS failover as a safety valve. Define health-check policies and traffic-shaping rules that reflect business priorities (e.g., L7 vs L4 considerations, regional compliance requirements, cost constraints).

- Deployment - Implement a layered routing strategy:

  - Anycast-enabled edge routing to shorten response paths and provide fast failover within a region.

  - Global traffic management to steer traffic toward healthiest clouds and regions based on real-time metrics.

  - DNS-based failover to add a cross-cloud safety valve, especially for edge cases where a cloud region becomes unhealthy.

- Observability - Instrument end-to-end latency, regional availability, and failover events. Use a unified dashboard to correlate customer experience with routing decisions and provider health signals. Continuously tune policies based on observed performance and evolving cloud topology.
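The baseline and observability steps above both depend on per-path latency and error figures. A minimal Python sketch of that aggregation follows; the `(path, latency_ms, ok)` sample shape, the path label, and the choice of a nearest-rank percentile are assumptions for illustration, and a production setup would normally rely on a metrics system rather than in-process lists.

```python
import math
from collections import defaultdict


def nearest_rank_percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(samples):
    """Aggregate (path, latency_ms, ok) samples into per-path p95 and error rate."""
    by_path = defaultdict(list)
    for path, latency_ms, ok in samples:
        by_path[path].append((latency_ms, ok))

    summary = {}
    for path, rows in by_path.items():
        latencies = [latency for latency, _ in rows]
        failures = sum(1 for _, ok in rows if not ok)
        summary[path] = {
            "p95_ms": nearest_rank_percentile(latencies, 95),
            "error_rate": failures / len(rows),
        }
    return summary


# Ten synthetic samples for one hypothetical path: 10 ms to 100 ms, one failure.
samples = [("aws/us-east-1", 10 * i, i != 7) for i in range(1, 11)]
report = summarize(samples)
```

Keeping the same metric definitions for every cloud and path is what makes the numbers comparable, which matters more than the exact percentile method.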

A practical takeaway is that you should not rely on a single mechanism for latency reduction or fault tolerance. Instead, layer proximity (anycast) with global routing decisions and DNS failover to create a robust, adaptable routing fabric. As you design, keep the policy language explicit: when do you prefer the nearest edge, when do you route by least congested region, and how will you respond to sudden outages in a specific cloud region?

Expert insight: The best-practice approach to cross-cloud routing emphasizes a global control plane that can dynamically adjust routing policies based on real-time health signals and performance metrics. This is exactly what Traffic Director aims to deliver for multi-cloud service meshes, illustrating the growing standard for centralized traffic management across cloud boundaries. (cloud.google.com)

Limitations, trade-offs, and common mistakes

- Cost and complexity - Multi-cloud routing introduces additional layers of instrumentation, policies, and potential points of failure. The benefit must be weighed against operational overhead and data egress costs, which can vary significantly by provider and region.

- Health-check precision - Overly aggressive health checks can cause flapping, where traffic is frequently redirected, leading to instability. Under-responding to real incidents can cause degraded performance. The sweet spot lies in carefully calibrated health checks and patient failover thresholds.

- Data residency and compliance - Routing decisions can inadvertently move data across borders. Align routing policies with data residency requirements to avoid compliance risk and potential latency penalties from cross-border traffic.

- Over-optimization for a single region - It’s common to optimize for a favored region, but this can backfire if demand shifts or a provider experiences a regional outage. A truly resilient design distributes risk across clouds and geographies.

- Observability gaps - Without end-to-end telemetry spanning all clouds, it’s easy to misinterpret performance. Cross-cloud dashboards and consistent metrics are essential for meaningful optimization.
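The health-check precision point above is usually handled with hysteresis: an endpoint changes state only after several consecutive probes disagree with its current state. The Python sketch below shows one way to do that. The class name and the default thresholds (3 failures to mark down, 2 successes to mark up) are illustrative assumptions, not recommended values.

```python
class HysteresisHealth:
    """Track endpoint health, flipping state only after a streak of
    consecutive probes that contradict the current state."""

    def __init__(self, fail_threshold=3, recover_threshold=2):
        self.fail_threshold = fail_threshold
        self.recover_threshold = recover_threshold
        self.healthy = True
        self._streak = 0  # consecutive probes contradicting current state

    def observe(self, probe_ok):
        """Record one probe result and return the (possibly updated) state."""
        if probe_ok == self.healthy:
            # The probe agrees with the current state, so reset the streak.
            self._streak = 0
        else:
            self._streak += 1
            needed = self.fail_threshold if self.healthy else self.recover_threshold
            if self._streak >= needed:
                self.healthy = not self.healthy
                self._streak = 0
        return self.healthy


tracker = HysteresisHealth()
probes = [False, True, False, False, False, True, True]
states = [tracker.observe(p) for p in probes]
# states == [True, True, True, True, False, False, True]
```

The single failed probe at the start does not trigger a failover, while three failures in a row do. Raising the thresholds reduces flapping at the cost of slower reaction to real incidents, which is the trade-off described in the list.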

Practical integration considerations for CloudRoute

CloudRoute specializes in cloud routing optimization and traffic engineering for multi-cloud environments. In practice, a reader can view CloudRoute as one of several solutions that help implement the layered approach described above - provisioning anycast edge presence, configuring cross-cloud routing, and coordinating DNS failover with health signals. When evaluating options, it’s useful to consider how a platform handles end-to-end visibility, health-driven routing, and integration with your cloud providers’ native tooling. For readers exploring domain asset management as part of application delivery across a global footprint, the following client resources illustrate how organizations catalog, verify, and manage endpoints across different top-level domains (TLDs):

List of domains by TLD - a resource for understanding the breadth of domain assets across top-level domains and the scale of endpoint management you may need in a global routing strategy.

List of domains in .buzz TLD - a concrete example of a niche TLD catalog that can factor into deployment planning and endpoint discovery in complex, domain-driven architectures.

RDAP & WHOIS Database - ensuring accurate, timely data about domain ownership and registration to support governance around DNS failover and edge routing decisions.

Taken together, these resources illustrate how domain asset management and DNS considerations intersect with cloud routing optimization. While CloudRoute offers a focused set of capabilities around traffic engineering and network performance, the overall pattern remains consistent: visibility, health-driven routing, and resilient failover across clouds create the most robust outcomes for multi-cloud SaaS deployments.

Conclusion: A practical, adaptable approach for multi-cloud SaaS routing

Latency, uptime, and cost are not separate challenges in multi-cloud environments - they are interdependent metrics that improve when routing decisions are data-driven and policy-driven. A practical framework that layers anycast routing, global traffic management, and DNS failover - while maintaining strong observability and governance - helps SaaS teams deliver reliable performance across AWS, GCP, and Azure. By embracing a modular, repeatable approach to discovery, decisioning, deployment, and observability, operators can adapt to changing cloud topologies and customer demand without rebuilding their routing architecture each time a cloud region changes. The end result is a clearer path to cloud routing optimization that aligns technical choices with business outcomes: lower latency for users, higher uptime for services, and greater resilience in the face of cloud disruptions.