Introduction: solving latency and uptime challenges in a multi-cloud world

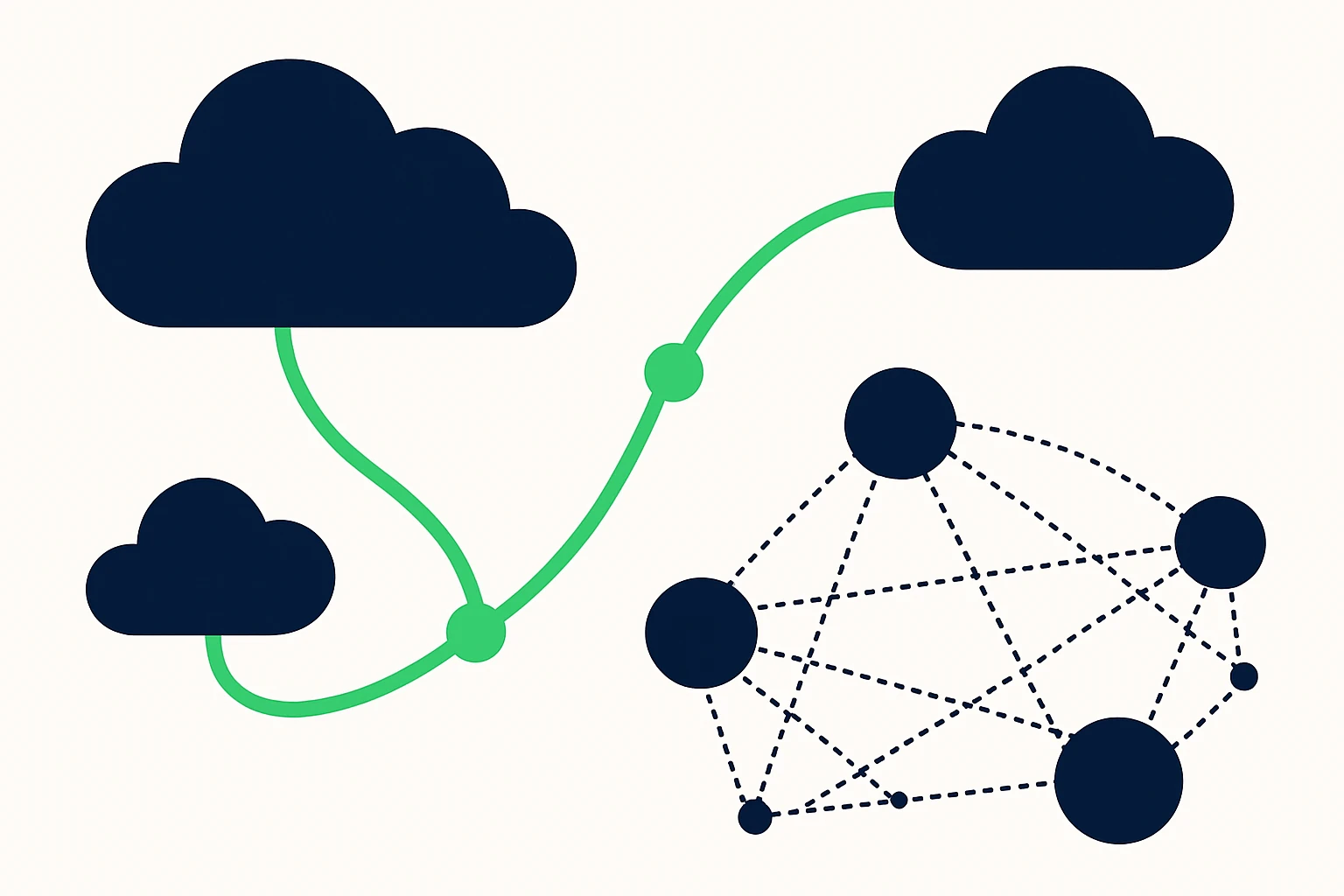

For modern SaaS, DevOps workflows, and enterprise workloads, routing data across multiple cloud providers is no longer a luxury - it's a necessity. Applications are often distributed across AWS, Google Cloud, Azure, and regional networks, making predictable latency, fault tolerance, and rapid failover essential. Static, provider-native routing alone cannot guarantee the lowest latency or the fastest recovery from outages in a globally distributed environment. Instead, a deliberate traffic engineering strategy - combining BGP optimization, Anycast routing, and DNS-based failover - can materially improve end-user experience and resilience. This perspective is grounded in the practical realities of public cloud interconnects and edge delivery, where inter-region latency and dynamic routing policies shape actual performance. Key building blocks like graceful restart for BGP, Anycast candidate paths, and health-checked DNS failover form the backbone of modern cloud routing optimization. (docs.cloud.google.com)

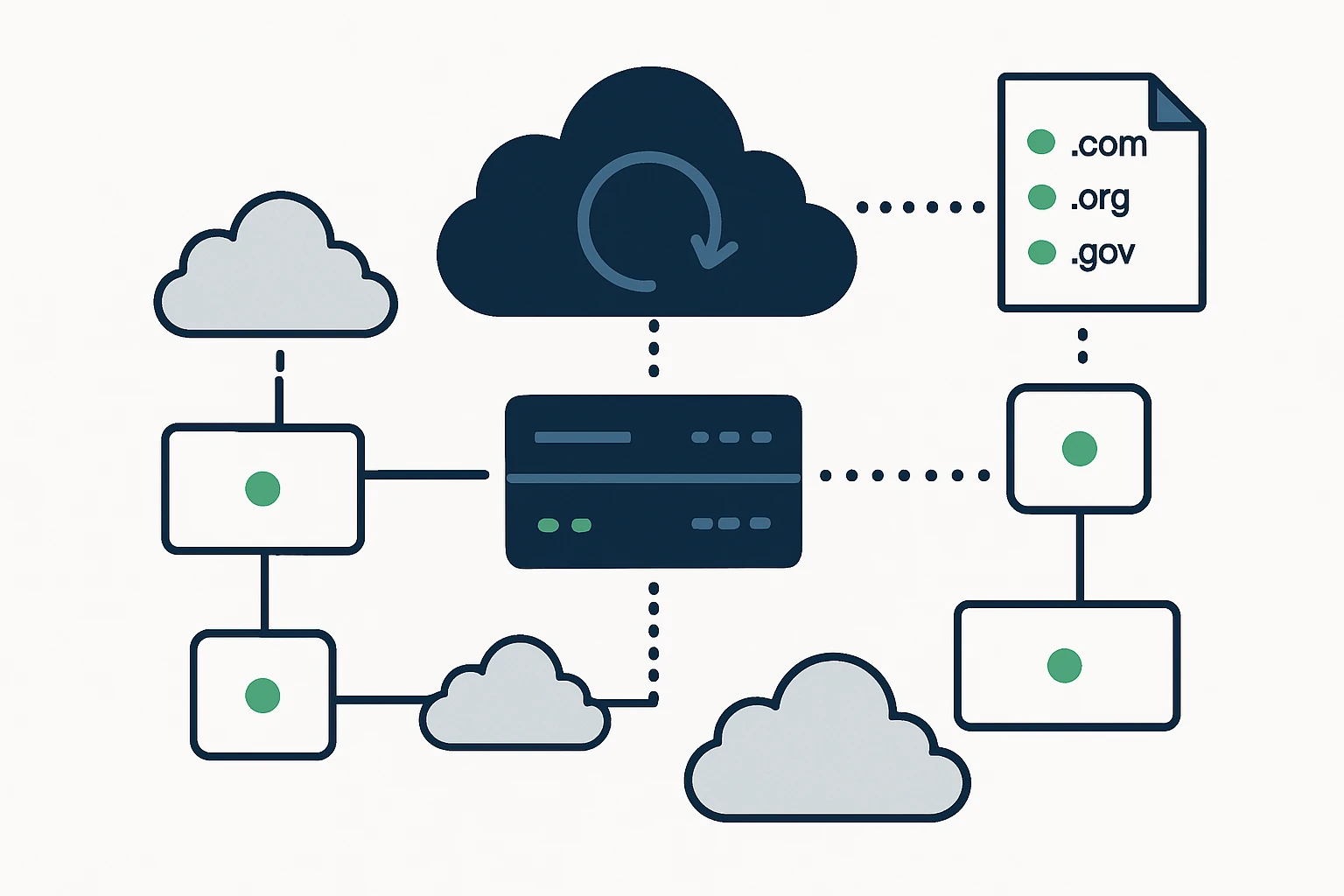

Core building blocks: BGP optimization, Anycast, and DNS failover

Anycast and latency reduction

Anycast routing advertises a single IP address from multiple locations, allowing the network to steer a user’s request to the nearest or best-performing node. This approach can dramatically reduce latency for globally distributed users because the choice of endpoint is made by the routing topology rather than by a fixed geolocation assumption. In practice, Anycast helps networks achieve CDN-like scale and resilience without relying on a single data center. However, it requires careful design to avoid edge-case instability and to ensure consistent state management across nodes. (anycast.com)
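To make the selection behavior concrete, here is a minimal sketch of how one client network ends up at an Anycast site. It is a toy model, not a routing implementation: the site names and cost values are hypothetical, and "cost" stands in for whatever the intermediate networks' BGP decisions actually favor.

```python
from typing import Dict, Optional, Set

def pick_anycast_site(costs: Dict[str, int], announcing: Set[str]) -> Optional[str]:
    """Return the announcing site with the lowest routing cost, or None.

    `costs` maps a hypothetical site name to an illustrative routing cost
    (for example, AS hops) as seen from a single client network.
    """
    candidates = {site: cost for site, cost in costs.items() if site in announcing}
    if not candidates:
        return None
    # Tie-break on the site name so this sketch is deterministic.
    return min(candidates, key=lambda site: (candidates[site], site))

# Illustrative values only; real costs come from the routing topology.
costs_from_client = {"fra": 2, "iad": 4, "sin": 5}

print(pick_anycast_site(costs_from_client, {"fra", "iad", "sin"}))  # fra
# If "fra" withdraws the prefix, the same IP is reached at the next-best site.
print(pick_anycast_site(costs_from_client, {"iad", "sin"}))  # iad
```

The second call shows why Anycast doubles as a failover mechanism: the client keeps using the same address, and only the announcing set changes.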

BGP optimization for cloud networks

Border Gateway Protocol (BGP) remains the workhorse for inter-domain routing across clouds and on-premises networks. Effective BGP optimization includes strategies such as local preference tuning, AS-path manipulation, and careful route filtering to steer traffic toward lower-latency paths while preserving failover capability. Modern cloud networking guidance emphasizes minimizing disruption through mechanisms like graceful restart, which allows routing sessions to recover without dropping traffic when routers or sessions reset. Successfully implemented, these practices reduce convergence time and improve overall network reliability during topology changes. (docs.cloud.google.com)
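The effect of local preference tuning can be sketched with a deliberately simplified version of BGP best-path selection. This example assumes only the first two comparisons (higher local preference, then shorter AS path) and omits the later tie-breakers; the peer labels are hypothetical and the AS numbers come from the range reserved for documentation.

```python
from dataclasses import dataclass, replace
from typing import List, Tuple

@dataclass(frozen=True)
class Route:
    next_hop: str              # hypothetical peer or interconnect label
    local_pref: int            # higher is preferred
    as_path: Tuple[int, ...]   # shorter is preferred

def best_route(routes: List[Route]) -> Route:
    """Pick a route by highest local_pref, then shortest AS path.

    Simplified: real BGP implementations apply further tie-breakers
    (origin, MED, and others) that this sketch leaves out.
    """
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path)))

via_a = Route("interconnect-a", 100, (64500, 64510))
via_b = Route("interconnect-b", 100, (64501, 64502, 64510))

# With equal local preference, the shorter AS path wins.
print(best_route([via_a, via_b]).next_hop)  # interconnect-a

# If measurements show that path B has lower latency, raising its
# local preference overrides the AS-path comparison.
via_b_preferred = replace(via_b, local_pref=200)
print(best_route([via_a, via_b_preferred]).next_hop)  # interconnect-b
```

Because the non-preferred route stays in the table, it remains available as a fallback if the preferred path is withdrawn.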

DNS-based failover for resilience

DNS failover is a fundamental technique for maintaining service availability when an endpoint becomes unhealthy. Modern DNS services support active-backup configurations, health checks, and staged traffic shifting to backup endpoints, so failover can be observed as it progresses instead of arriving as an abrupt cutover. When configured with proper health checks and TTLs, DNS failover can act as a lightweight first line of defense before more invasive traffic steering is required. This approach is widely documented across major cloud providers and DNS service platforms. (docs.aws.amazon.com)
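As a rough illustration of the active-backup pattern, the sketch below picks a DNS answer from a priority-ordered list and estimates how long clients may keep hitting a failed endpoint. The function names and parameters are assumptions made for this example, not any provider's API, and the addresses come from ranges reserved for documentation.

```python
from typing import Dict, List

def dns_answer(priority_ips: List[str], healthy: Dict[str, bool]) -> str:
    """Return the first healthy IP in priority order (primary first)."""
    for ip in priority_ips:
        if healthy.get(ip, False):
            return ip
    # Design choice in this sketch: if every endpoint looks unhealthy,
    # keep answering with the primary instead of returning nothing.
    return priority_ips[0]

def worst_case_failover_s(check_interval_s: int, failure_threshold: int, ttl_s: int) -> int:
    """Rough upper bound: time to detect the failure plus one full cached TTL.

    Ignores resolvers that do not honor the TTL.
    """
    return check_interval_s * failure_threshold + ttl_s

endpoints = ["192.0.2.10", "198.51.100.10"]  # primary, then backup

print(dns_answer(endpoints, {"192.0.2.10": True, "198.51.100.10": True}))   # 192.0.2.10
print(dns_answer(endpoints, {"192.0.2.10": False, "198.51.100.10": True}))  # 198.51.100.10
print(worst_case_failover_s(check_interval_s=10, failure_threshold=3, ttl_s=60))  # 90
```

The estimate shows the trade-off directly: with a 10-second check interval and three required failures, lowering the TTL from 60 to 30 seconds cuts the bound from 90 to 60 seconds, at the cost of more DNS queries.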

A practical playbook: turning theory into action

Translate the building blocks into a repeatable workflow that balances performance, cost, and risk. The following framework helps teams structure decisions and actions across a multi-cloud footprint.

- Observe (map and measure): establish latency targets by region, service, and user segment. Collect data from multiple paths (e.g., inter-cloud interconnects, regional POPs, and edge gateways) to understand where latency or jitter originates. Use continuous monitoring to track path stability and failure rates. Supporting context: public cloud connectivity studies show that inter-region performance varies by provider and region, underscoring the need for ongoing measurement. (arxiv.org)

- Align (design routing targets): determine which services benefit most from Anycast, where DNS failover is appropriate, and which links/peers should be preferred under load. Align target endpoints across clouds (and relevant regions) to meet latency and availability goals. Overview: multi-cloud networking requires harmonizing routing policies across providers to achieve predictable performance.

- Decide (policy mix): implement a layered routing strategy combining:

  - BGP optimization at the edge (local preference, measured routes)

  - Anycast for critical services to route users to the nearest healthy edge

  - DNS failover to move traffic away from unhealthy endpoints with controlled TTLs

- Act (deploy and validate): roll out changes incrementally, verify health checks pass, and monitor performance after each adjustment. Maintain rollback plans and guardrails to prevent oscillation or route flaps. Note: measurements after each change are essential to confirm that latency targets are met and failover happens as intended.
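The "Act" step can be sketched as a small rollout loop with a latency guardrail. This is a minimal sketch under stated assumptions: `measure_p95_ms` is a hypothetical hook into your monitoring, the step percentages and the target are illustrative, and "roll back" is reduced to returning 0.

```python
from typing import Callable, Sequence

def staged_rollout(steps: Sequence[int],
                   measure_p95_ms: Callable[[int], float],
                   target_ms: float) -> int:
    """Shift traffic step by step; return the final percentage on the new policy.

    Returns 0 when any step breaches the latency target, meaning "roll back".
    """
    applied = 0
    for pct in steps:
        if measure_p95_ms(pct) > target_ms:
            return 0  # guardrail tripped: revert to the previous policy
        applied = pct
    return applied

# Fake measurements standing in for real monitoring data.
fake_p95 = {5: 82.0, 25: 85.0, 50: 131.0, 100: 140.0}

def measure(pct: int) -> float:
    return fake_p95[pct]

print(staged_rollout([5, 25, 50, 100], measure, target_ms=120.0))  # 0
print(staged_rollout([5, 25], measure, target_ms=120.0))           # 25
```

Measuring after every step, not only at the end, is what keeps a bad change from reaching all traffic before anyone notices.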

Structured framework: a concise, repeatable approach

Below is a compact framework teams can apply when planning and validating traffic-engineering changes across a multi-cloud environment. It reads as a checklist and a decision map you can adapt for your organization’s topology.

- Observability - latency, path stability, and endpoint health indicators across clouds

- Routing policy design - decide where to apply BGP tweaks vs. DNS-based failover vs. Anycast

- Path steering - prefer lower-latency interconnects and edge locations while maintaining redundancy

- Health and failover - implement health checks and staged failover to prevent traffic surges on backups

- Validation - run controlled experiments, measure end-user latency, and verify failover smoothness
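The path-steering item in the checklist above can be illustrated with a few lines that rank candidate paths by measured latency while always keeping a backup. The path names and sample values are hypothetical, and the median is just one reasonable choice for damping outliers.

```python
from statistics import median
from typing import Dict, List, Tuple

def choose_paths(samples_ms: Dict[str, List[float]]) -> Tuple[str, str]:
    """Return (primary, backup): the two paths with the lowest median latency."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two paths to keep redundancy")
    ranked = sorted(samples_ms, key=lambda path: median(samples_ms[path]))
    return ranked[0], ranked[1]

# Illustrative latency samples in milliseconds per candidate path.
samples = {
    "interconnect-a": [42.0, 40.5, 95.0],   # one outlier, median 42.0
    "interconnect-b": [38.0, 39.5, 41.0],   # median 39.5
    "public-internet": [70.0, 66.0, 73.0],  # median 70.0
}

print(choose_paths(samples))  # ('interconnect-b', 'interconnect-a')
```

Refusing to pick a primary when only one path exists is a deliberate choice here: it turns a missing backup into a visible error instead of a silent loss of redundancy.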

Limitations and common mistakes: what to avoid

Even a well-designed traffic-engineering strategy can fail if misapplied. Here are the most common pitfalls to watch for, along with practical mitigations:

- Overreliance on a single mechanism - BGP optimization, Anycast, and DNS failover each solve different parts of the problem. Relying on one technique can leave gaps in latency or resilience. Use a layered approach with cross-checks between layers. See industry guidance on BGP graceful restart and DNS failover for reliability. (docs.cloud.google.com)

- DNS TTL and cache effects - very short TTLs may cause rapid failovers that confuse clients or overwhelm upstream resolvers; conversely, long TTLs slow down failover because clients keep using cached answers. Calibrate TTLs to match your failover cadence and monitoring frequency. (docs.aws.amazon.com)

- Edge-case instability with Anycast - while Anycast reduces latency, it can complicate stateful sessions and cause route flaps if not managed with stable health signals and edge coordination. Plan for session stickiness or application-layer failover when necessary. (anycast.com)

- Misaligned health checks - health checks that are too aggressive or poorly scoped can trigger unnecessary failover or mask real problems. Align checks with actual user-facing impact and service semantics. (docs.aws.amazon.com)
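One common way to keep health checks from triggering unnecessary failover is to require several consecutive results before changing state. The sketch below assumes hypothetical `fall` and `rise` thresholds; the values shown are illustrative, not recommendations.

```python
class HealthState:
    """Tracks endpoint health with hysteresis to avoid flapping."""

    def __init__(self, fall: int = 3, rise: int = 2) -> None:
        self.fall = fall      # consecutive failures needed to mark unhealthy
        self.rise = rise      # consecutive successes needed to mark healthy
        self.healthy = True
        self._streak = 0      # consecutive results contradicting current state

    def observe(self, ok: bool) -> bool:
        """Record one check result and return the current health state."""
        if ok == self.healthy:
            self._streak = 0  # result agrees with the state: reset the streak
        else:
            self._streak += 1
            threshold = self.rise if ok else self.fall
            if self._streak >= threshold:
                self.healthy = ok
                self._streak = 0
        return self.healthy

state = HealthState(fall=3, rise=2)
results = [True, False, True, False, False, False, True, True]
print([state.observe(r) for r in results])
# [True, True, True, True, True, False, False, True]
```

The single failed check at the second position does not cause a failover; only three failures in a row do, and recovery likewise waits for two clean checks.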

Client integration: how CloudRoute fits into the picture

The CloudRoute ecosystem centers on turning advanced routing insights into measurable performance gains. In practice, teams adopt a staged approach: assess current latency profiles, implement a blended routing strategy, and continuously validate improvements across regions and clouds. CloudRoute’s platform can help orchestrate BGP policy tuning, Anycast edge deployments, and DNS-based failover with unified telemetry. For practitioners looking to enrich routing datasets or test hypotheses, consider exploring diverse domain datasets to simulate traffic patterns across providers. For example, you can download lists of .sk, .world, and .life domains to measure how geolocation and domain distribution influence routing decisions in your experiments. These datasets can serve as realistic inputs for testing geolocation-based routing or health-check-driven failover scenarios.

Case insights: what the numbers look like in practice

In real-world deployments, the combination of Anycast and DNS failover often manifests as a smoother user experience during regional outages and inter-region congestion. Public cloud performance studies consistently highlight that inter-cloud latency is not uniform and varies by provider and region, underscoring the value of proactive traffic steering and cross-cloud redundancy. When implemented thoughtfully, these patterns translate into lower user-perceived latency and faster recovery from failures, even under peak load. For teams invested in rigorous performance benchmarks, the literature on cloud network performance provides a useful backdrop for validating improvements across providers. (arxiv.org)

How to measure success: metrics that matter

To demonstrate value to leadership and operations, track a concise set of metrics over time. Key indicators include:

- End-user-facing latency by region and provider

- Convergence time after a topology change or failure

- Frequency and duration of DNS failover events

- Share of requests served by the intended Anycast endpoint, plus path stability

- Uptime and recovery time for critical services during regional outages
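Two of these metrics can be computed from raw samples with very little code. The sketch below assumes you already collect latency samples per region and start/end timestamps for each failover event; the region names and numbers are made up for illustration.

```python
from typing import Dict, List, Tuple

def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list; pct is an integer 1..100."""
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct * n / 100
    return ordered[max(rank, 1) - 1]

def p95_by_region(samples_ms: Dict[str, List[float]]) -> Dict[str, float]:
    """95th-percentile latency for each region."""
    return {region: percentile(vals, 95) for region, vals in samples_ms.items()}

def failover_summary(events: List[Tuple[float, float]]) -> Dict[str, float]:
    """Count, total, and longest duration for (start_s, end_s) failover events."""
    durations = [end - start for start, end in events]
    return {
        "count": len(durations),
        "total_s": sum(durations),
        "longest_s": max(durations, default=0.0),
    }

latency = {
    "eu-west": [20.0, 22.0, 25.0, 21.0, 90.0],
    "us-east": [35.0, 36.0, 34.0, 40.0, 38.0],
}
print(p95_by_region(latency))  # {'eu-west': 90.0, 'us-east': 40.0}
print(failover_summary([(0.0, 30.0), (100.0, 190.0)]))
# {'count': 2, 'total_s': 120.0, 'longest_s': 90.0}
```

Reporting a high percentile instead of the average keeps tail latency visible, which is usually where routing problems show up first.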

Technical appendix: quick reference and recommended practices

The following concise guidelines summarize practical steps for teams starting a multi-cloud routing optimization program:

- Document latency targets per region and service, and baseline current performance before changes.

- Implement BGP graceful restart where supported to minimize disruption during control-plane failures.

- Deploy Anycast for front-facing, stateless services to reduce hop count and improve failover responsiveness.

- Use DNS failover with health checks to route around unhealthy endpoints, and tune TTLs to balance speed and stability.

- Validate changes with staged tests and controlled rollouts to avoid destabilizing production traffic.

Conclusion: a disciplined, layered approach to multi-cloud reliability

As applications continue to span multiple clouds, a disciplined traffic-engineering strategy becomes a competitive differentiator. By combining BGP optimization, Anycast routing, and DNS failover, teams can reduce latency for global users, shorten recovery times after outages, and create a more resilient cloud-network fabric. The path from theory to practice is not inherently simple, but with a structured playbook, robust monitoring, and careful risk management, it is possible to achieve measurable improvements in cloud network performance across providers. For organizations that want to accelerate their practice, a guided framework anchored in observability, policy design, and staged deployment offers the clearest route to repeatable, auditable results.